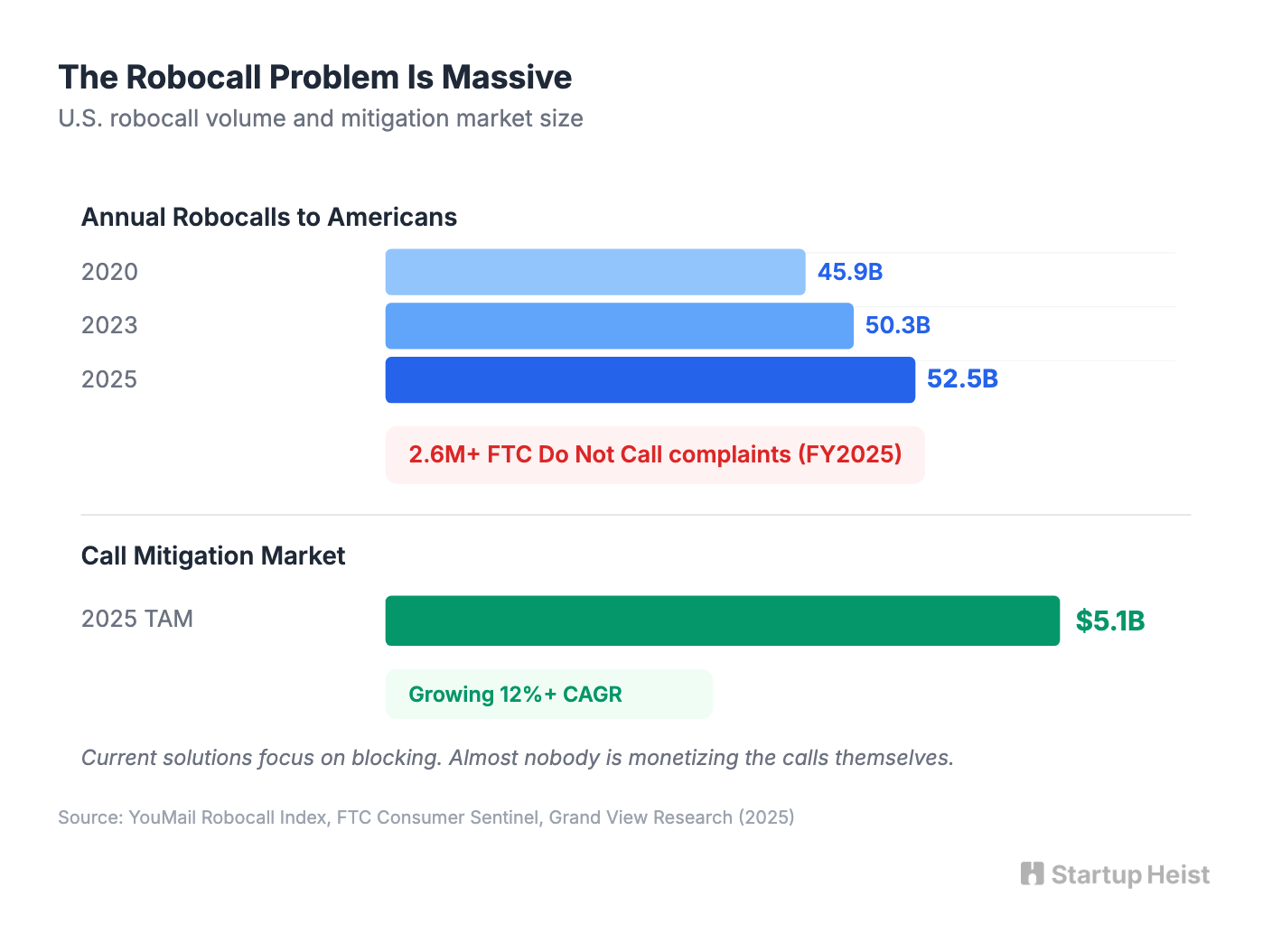

Americans got hit with 52.5 billion robocalls in 2025. The FTC logged over 2.6 million Do Not Call complaints in fiscal year 2025 alone. You already know this problem. What you might not have considered is the product sitting inside it: not another call blocker, but an AI persona that answers suspicious calls, drags the scammer into a long, absurd conversation, and turns the transcript into a shareable clip. A Scam-Waster Bot. Part revenge, part entertainment, part creator tool.

The obvious version is a novelty consumer app. That works, but consumer willingness to pay for scam call revenge is low. Jolly Roger Telephone Company, the clearest proof of concept, generates roughly $1.2 million in annual revenue at around $24 per year per subscriber. It now runs its bots on ChatGPT. That validates demand while revealing the ceiling on mass-market ARPU.

The business worth building goes further.

The money: 1,000 creator subscribers at $24/month = $24K MRR, with a viral free tier feeding the funnel. Jolly Roger already does $1.2M/year on consumer alone.
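A quick sanity check of the figures above. The implied Jolly Roger subscriber count is a simplifying assumption: it treats all of that ~$1.2M as coming from the ~$24/year plan, which the numbers here don't confirm. The last line shows the gap the creator tier is meant to close, comparing annual revenue per creator subscriber with annual revenue per consumer subscriber.

```python
# Back-of-envelope unit economics using the figures quoted in this article.
CREATOR_PRICE_PER_MONTH = 24        # USD, creator tier
CREATOR_SUBSCRIBERS = 1_000

JOLLY_ROGER_ANNUAL_REVENUE = 1_200_000  # USD, approximate
JOLLY_ROGER_PRICE_PER_YEAR = 24         # USD per subscriber, approximate

mrr = CREATOR_PRICE_PER_MONTH * CREATOR_SUBSCRIBERS
arr = mrr * 12

# Assumption: all Jolly Roger revenue comes from the ~$24/year plan.
implied_jr_subscribers = JOLLY_ROGER_ANNUAL_REVENUE // JOLLY_ROGER_PRICE_PER_YEAR

# Annual revenue per user: creator tier vs. consumer tier.
creator_arpu_year = CREATOR_PRICE_PER_MONTH * 12
arpu_multiple = creator_arpu_year / JOLLY_ROGER_PRICE_PER_YEAR

print(f"Creator MRR: ${mrr:,}")                                       # Creator MRR: $24,000
print(f"Creator ARR: ${arr:,}")                                       # Creator ARR: $288,000
print(f"Implied Jolly Roger subscribers: {implied_jr_subscribers:,}")  # 50,000
print(f"Creator ARPU is {arpu_multiple:.0f}x consumer ARPU")          # 12x
```

In other words, 1,000 creators at $24/month is about $288K a year, and each creator is worth roughly twelve consumer subscribers at the Jolly Roger price point.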

Inside:

• Full MVP scope and unit economics

• Two-layer pricing: consumer to creator

• Creator-first GTM with outreach templates

• Four compounding moats worth building

Why This Works Now

Telephony APIs, streaming speech-to-text, fast LLMs, and decent synthetic voices have all been commoditized by 2026. An indie founder can orchestrate the full scambaiting loop without building deep infrastructure: suspicious call comes in, AI persona answers, scammer wastes time, transcript becomes a clip, user laughs, shares, and invites others.
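The loop described above can be modeled as a small state machine. This is a minimal sketch under stated assumptions: the stage names and the rule that only the conversation stage repeats are illustrative, and the actual telephony, speech-to-text, LLM, and voice calls that would drive each transition are left out.

```python
from enum import Enum, auto


class Stage(Enum):
    """Hypothetical stages of the scambaiting loop; names are illustrative."""
    INCOMING = auto()     # suspicious call arrives
    ANSWERED = auto()     # AI persona picks up
    ENGAGED = auto()      # one conversation turn (speech-to-text -> LLM -> synthetic voice)
    TRANSCRIBED = auto()  # call ends and the transcript is saved
    CLIPPED = auto()      # best moment is cut into a short clip
    SHARED = auto()       # user posts the clip, which can bring in new users


# Allowed transitions: ENGAGED repeats once per turn, everything else moves forward.
NEXT = {
    Stage.INCOMING: {Stage.ANSWERED},
    Stage.ANSWERED: {Stage.ENGAGED},
    Stage.ENGAGED: {Stage.ENGAGED, Stage.TRANSCRIBED},
    Stage.TRANSCRIBED: {Stage.CLIPPED},
    Stage.CLIPPED: {Stage.SHARED},
    Stage.SHARED: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Move to `target`, rejecting out-of-order transitions."""
    if target not in NEXT[current]:
        raise ValueError(f"cannot go from {current.name} to {target.name}")
    return target


def run_call(turns: int) -> list[Stage]:
    """Walk one call through the loop with `turns` conversation turns (turns >= 1)."""
    stage = Stage.INCOMING
    history = [stage]
    plan = [Stage.ANSWERED] + [Stage.ENGAGED] * turns + [
        Stage.TRANSCRIBED, Stage.CLIPPED, Stage.SHARED
    ]
    for target in plan:
        stage = advance(stage, target)
        history.append(stage)
    return history


history = run_call(turns=3)
print([s.name for s in history])
```

Keeping the transitions explicit makes it easy to log where calls drop out of the loop (for example, answered but never clipped), which is the funnel the viral mechanics depend on.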

Regulators are tightening the rules, too. On February 8, 2024, the FCC ruled that AI-generated voices in robocalls count as "artificial or prerecorded" under the TCPA, which makes AI-voiced robocalls placed without the called party's prior consent illegal. Enforcement is likely to intensify. Public frustration with scam calls is growing, and with it, receptivity to products that channel that frustration into something satisfying.

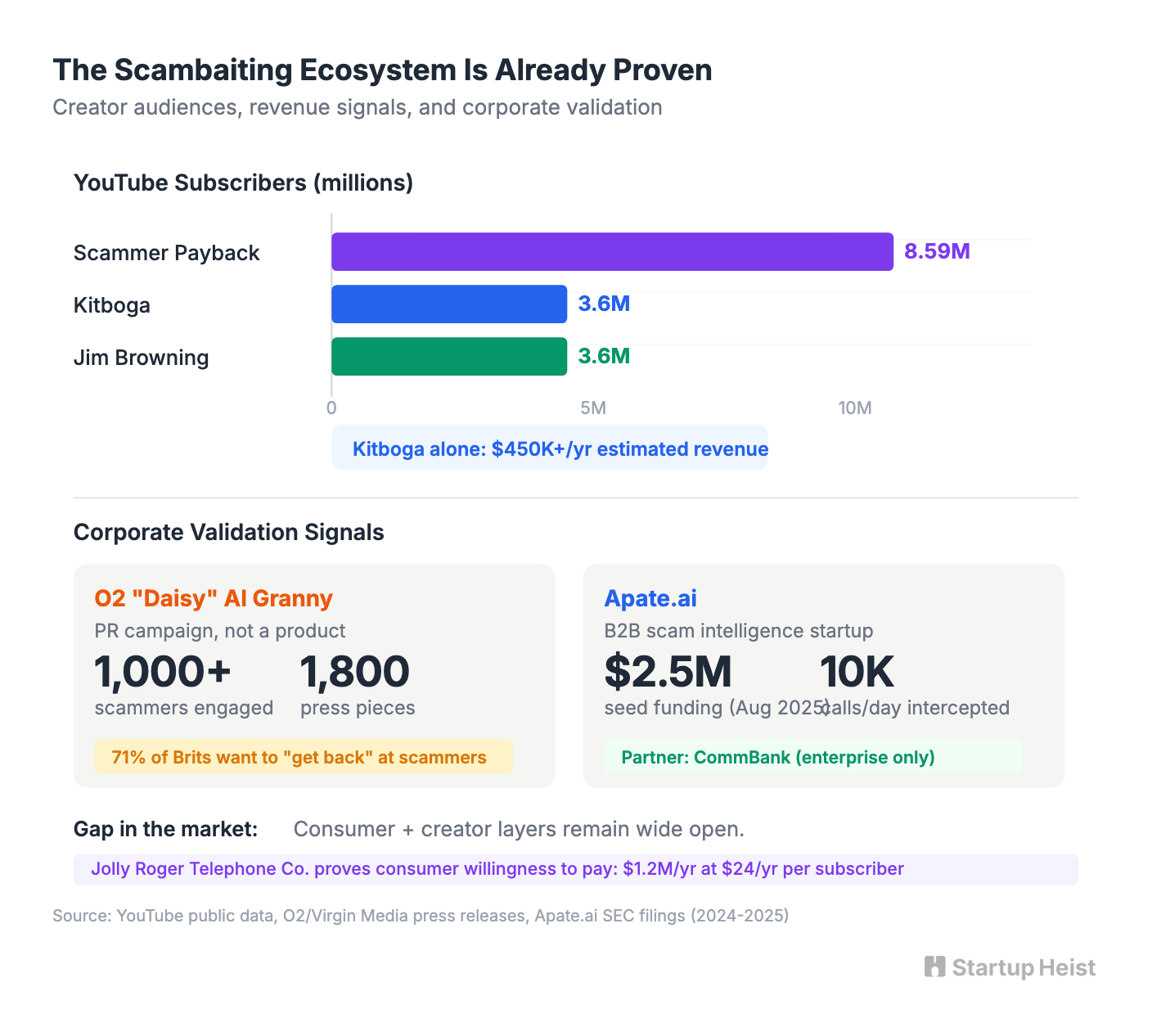

The scambaiting creator niche is already proven and profitable. Scammer Payback has 8.59 million YouTube subscribers and over 1.25 billion views. Kitboga has 3.6 million subscribers and earns an estimated $450,000 or more annually. Jim Browning collaborates directly with law enforcement and telecom companies. These creators demonstrate massive audience demand for scammer entertainment, but their production process is still painfully manual. A tool that automates the call-handling and clip pipeline is genuine infrastructure for this niche.

Who's Already Proving the Model

Virgin Media O2 showed how powerful the emotional positioning is. In late 2024, they launched Daisy, an AI "granny" persona built as a PR campaign to waste scammers' time. Daisy engaged over 1,000 scammers, kept some on the line for 40 to 50 minutes, earned 1,800 pieces of global media coverage rated 100% positive, and won the 2025 Marketing Week Award for Best Use of AI. O2's internal research showed 71% of surveyed Brits wanted a way to "get back" at scammers. The emotional framing outperformed any security messaging they'd tried. Daisy was a marketing campaign with roughly £20K in media spend, not a commercial product, but it validated the viral wedge perfectly.

Meanwhile, Australian startup Apate.ai raised $2.5 million in seed funding in August 2025 and partnered with CommBank to deploy thousands of AI "victim bots" against scammers across voice and SMS channels, extracting conversation intelligence that telcos and banks will pay for. One partner reportedly uses Apate bots to intercept nearly 10,000 scam calls per day. Apate validates the B2B intelligence play but operates enterprise-only. The consumer and creator layers remain wide open.

The Moat

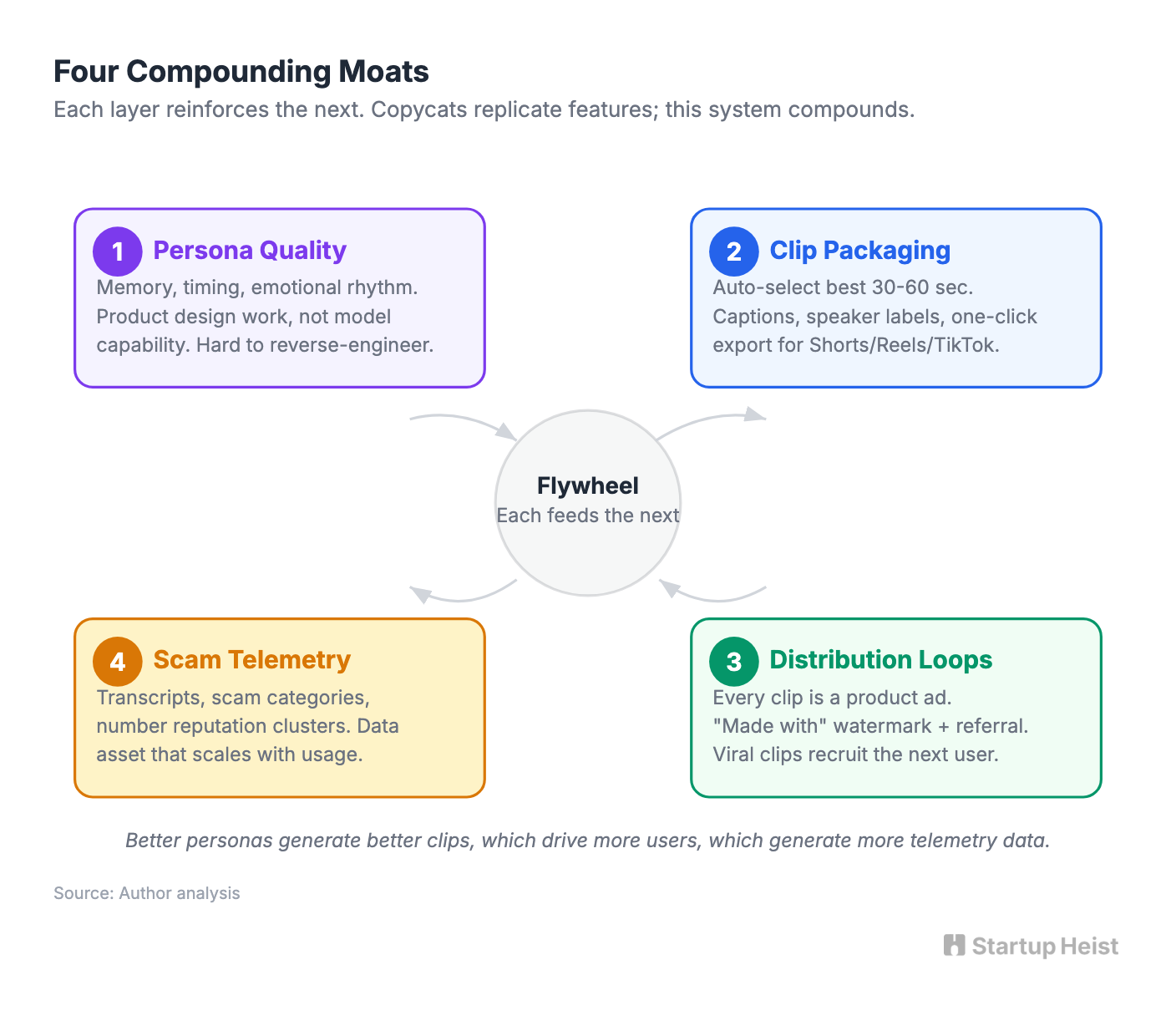

"AI granny wastes scammer time" gets copied in a weekend hackathon. Defensibility comes from somewhere else entirely.

1. Persona quality. Most copycats will build generic bots. The winner builds personas that are genuinely entertaining and stable under conversational pressure: confused retiree, paranoid crypto guy, overfriendly church auntie, suspicious landlord, bored HOA president. Good personas need memory, timing, emotional rhythm, and escalation logic. That's product design work, not model capability.

2. Clip packaging. Whoever turns raw scam calls into postable content fastest wins the creator market. Auto-select the funniest 30 to 60 seconds. Burn captions. Add speaker labels. One-click exports for Shorts, Reels, and TikTok.

3. Distribution loops. Every exported clip carries a subtle "made with" watermark or referral mechanic. Viral clips recruit the next user. Low-priced utilities need organic growth to survive.

4. Structured scam telemetry. Store transcripts, scam angle categories, language patterns, number reputation clusters, and "minutes wasted" stats. Over time you build a data asset competitors can't replicate without operating at scale. That telemetry can later support B2B products for carriers, security vendors, or journalists.
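The "memory" and "escalation logic" from the first moat can be sketched as plain data plus a few rules. Everything here is hypothetical: the `Persona` class, the tactic strings, and the idea that each turn produces a directive fed into an LLM prompt are illustrative assumptions. Timing and emotional rhythm are not modeled.

```python
from dataclasses import dataclass, field


@dataclass
class Persona:
    """Hypothetical persona spec: ordered escalation tactics plus a small memory."""
    name: str
    escalation: list[str]          # tactics, from mild to maximum stalling
    turns_per_stage: int = 3       # escalate after this many turns
    memory: dict[str, str] = field(default_factory=dict)
    turn: int = 0

    def stage(self) -> str:
        """Current tactic; stays on the last one once escalation runs out."""
        idx = min(self.turn // self.turns_per_stage, len(self.escalation) - 1)
        return self.escalation[idx]

    def remember(self, key: str, value: str) -> None:
        """Keep a detail the scammer gave so the persona can call back to it."""
        self.memory[key] = value

    def next_directive(self) -> str:
        """Instruction for this turn, e.g. to be placed in an LLM prompt (assumed design)."""
        directive = f"[{self.name}] {self.stage()}"
        if "caller_name" in self.memory:
            directive += f"; address the caller as {self.memory['caller_name']}"
        self.turn += 1
        return directive


granny = Persona(
    name="confused retiree",
    escalation=[
        "mishear everything",
        "wander into long tangents",
        "pretend to comply",
        "stall on every payment step",
    ],
)
granny.remember("caller_name", "Kevin")
for _ in range(7):
    directive = granny.next_directive()
print(directive)
```

Keeping the escalation schedule outside the model is one way to get the "stable under conversational pressure" behavior: the LLM improvises the lines, but the persona's arc is fixed by product design.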
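For the second moat, clip packaging, auto-selecting a 30 to 60 second window can be framed as a search over transcript segments. This sketch assumes each segment already carries a hypothetical "funniness" score (for example from an LLM rating pass), and it picks the window with the highest mean score so a long, boring stretch can't win just by being long. It then emits speaker-labeled captions in SubRip (SRT) format; burning them into video would be a separate step, for example with FFmpeg's `subtitles` filter.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start: float   # seconds from call start
    end: float
    speaker: str   # e.g. "Scammer" or "Persona"
    text: str
    score: float   # hypothetical funniness score in [0, 1]


def best_clip(segments: list[Segment], min_len: float = 30.0,
              max_len: float = 60.0) -> list[Segment]:
    """Contiguous run of segments lasting min_len..max_len seconds with the highest
    mean score. Assumes segments are sorted and non-overlapping; brute force is fine
    for a single call's transcript."""
    best: list[Segment] = []
    best_mean = float("-inf")
    for i in range(len(segments)):
        total = 0.0
        for j in range(i, len(segments)):
            total += segments[j].score
            length = segments[j].end - segments[i].start
            if length > max_len:
                break
            mean = total / (j - i + 1)
            if length >= min_len and mean > best_mean:
                best, best_mean = segments[i : j + 1], mean
    return best


def srt_time(seconds: float) -> str:
    """Format seconds as an SRT timestamp, HH:MM:SS,mmm."""
    ms = round(seconds * 1000)
    h, rem = divmod(ms, 3_600_000)
    m, rem = divmod(rem, 60_000)
    s, ms = divmod(rem, 1000)
    return f"{h:02d}:{m:02d}:{s:02d},{ms:03d}"


def to_srt(clip: list[Segment]) -> str:
    """Speaker-labeled captions, re-timed so the clip starts at 00:00:00,000."""
    if not clip:
        return ""
    offset = clip[0].start
    blocks = []
    for n, seg in enumerate(clip, start=1):
        blocks.append(
            f"{n}\n{srt_time(seg.start - offset)} --> {srt_time(seg.end - offset)}\n"
            f"{seg.speaker}: {seg.text}\n"
        )
    return "\n".join(blocks)


transcript = [
    Segment(0.0, 10.0, "Scammer", "This is Microsoft, your computer has a virus.", 0.1),
    Segment(10.0, 25.0, "Persona", "Is that the computer in the kitchen?", 0.8),
    Segment(25.0, 35.0, "Scammer", "Ma'am, please open the Start menu.", 0.4),
    Segment(35.0, 50.0, "Persona", "I started the menu. Soup or sandwich?", 0.9),
    Segment(50.0, 90.0, "Scammer", "Please listen carefully.", 0.2),
]
clip = best_clip(transcript)
print(to_srt(clip))
```

Here the selector skips the scripted opener and returns the 40-second stretch from 0:10 to 0:50. Whether mean score, total score, or something smarter works best is an open product question.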
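The fourth moat, structured telemetry, starts with a record per handled call. The field names below are illustrative, not a fixed schema, and grouping numbers by prefix is only a crude stand-in for "number reputation clusters": the 8-character prefix assumes North American (+1) numbers in E.164 form, where it covers country code, area code, and exchange. Language-pattern analysis is left out.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class CallRecord:
    """One handled scam call (hypothetical schema)."""
    number: str          # caller ID in E.164 form, e.g. "+12025550143"
    category: str        # scam angle, e.g. "tech support", "car warranty"
    seconds_wasted: int  # how long the persona kept the scammer on the line
    transcript: str


def minutes_wasted_by_category(records: list[CallRecord]) -> dict[str, float]:
    """Total scammer minutes wasted, grouped by scam angle."""
    totals: dict[str, float] = defaultdict(float)
    for r in records:
        totals[r.category] += r.seconds_wasted / 60
    return dict(totals)


def prefix_clusters(records: list[CallRecord], prefix_len: int = 8) -> Counter:
    """Count calls per number prefix as a rough reputation cluster.
    "+1202555" = country code + area code + exchange, valid only for +1 numbers."""
    return Counter(r.number[:prefix_len] for r in records)


records = [
    CallRecord("+12025550143", "tech support", 1500, "..."),
    CallRecord("+12025550188", "tech support", 900, "..."),
    CallRecord("+13125550101", "car warranty", 300, "..."),
]
minutes = minutes_wasted_by_category(records)
clusters = prefix_clusters(records)
print(minutes)                    # {'tech support': 40.0, 'car warranty': 5.0}
print(clusters.most_common(1))    # [('+1202555', 2)]
```

Even this simple shape yields the "minutes wasted" stat users like to share and the per-angle, per-cluster counts a carrier or security vendor might eventually want.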

The Play

The business splits into two layers:
