The Heist: A federal agency just handed you the seed data for a category-defining product in regulated advertising.

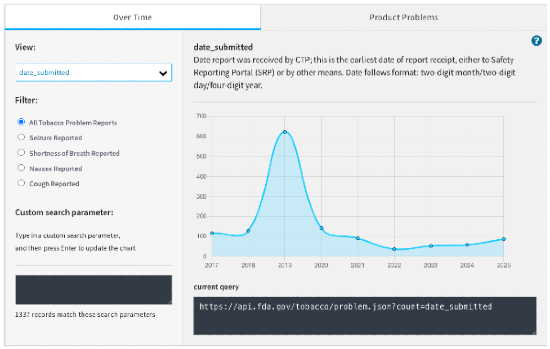

On February 17, 2026, openFDA published two new datasets: Tobacco Digital Ads Research and Tobacco Prevention Ads Research. Both are available via API, downloadable as JSON, and broken into machine-readable fields that decompose creative ads into analyzable components: formats, channels, messaging structures. Most people will read this as "FDA released another research dataset." The better read: a federal agency just gave you structured training data for one of the hardest problems in modern marketing — getting attention in categories where a single bad phrase, one youth-coded visual, or one unsupported claim can get your ad pulled, your account disabled, or a regulator interested.

That data is a wedge into something much bigger.

The product worth building is a Regulated Attention OS — an AI-powered compliance and creative intelligence tool that helps advertisers in sensitive categories answer two questions faster than anyone else. First: what creative patterns reliably earn attention in restricted markets? Second: which words, claims, formats, and contexts are likely to trigger platform rejection or regulatory scrutiny?

If you've been hunting for B2B SaaS ideas at the intersection of AI and advertising compliance, this is a strong entry point. The customer base spans nicotine-adjacent brands, alcohol marketers, supplement companies, gambling operators and their affiliates, agencies servicing any of those accounts, and the growing wave of AI creative tools that need a compliance layer bolted on.

Nobody owns the creator-facing version of this workflow yet. Regulation is becoming more structured at the exact moment ad creation is becoming more automated. openFDA gives you one clean seed dataset. The market gives you the pain.

Why This Is Real

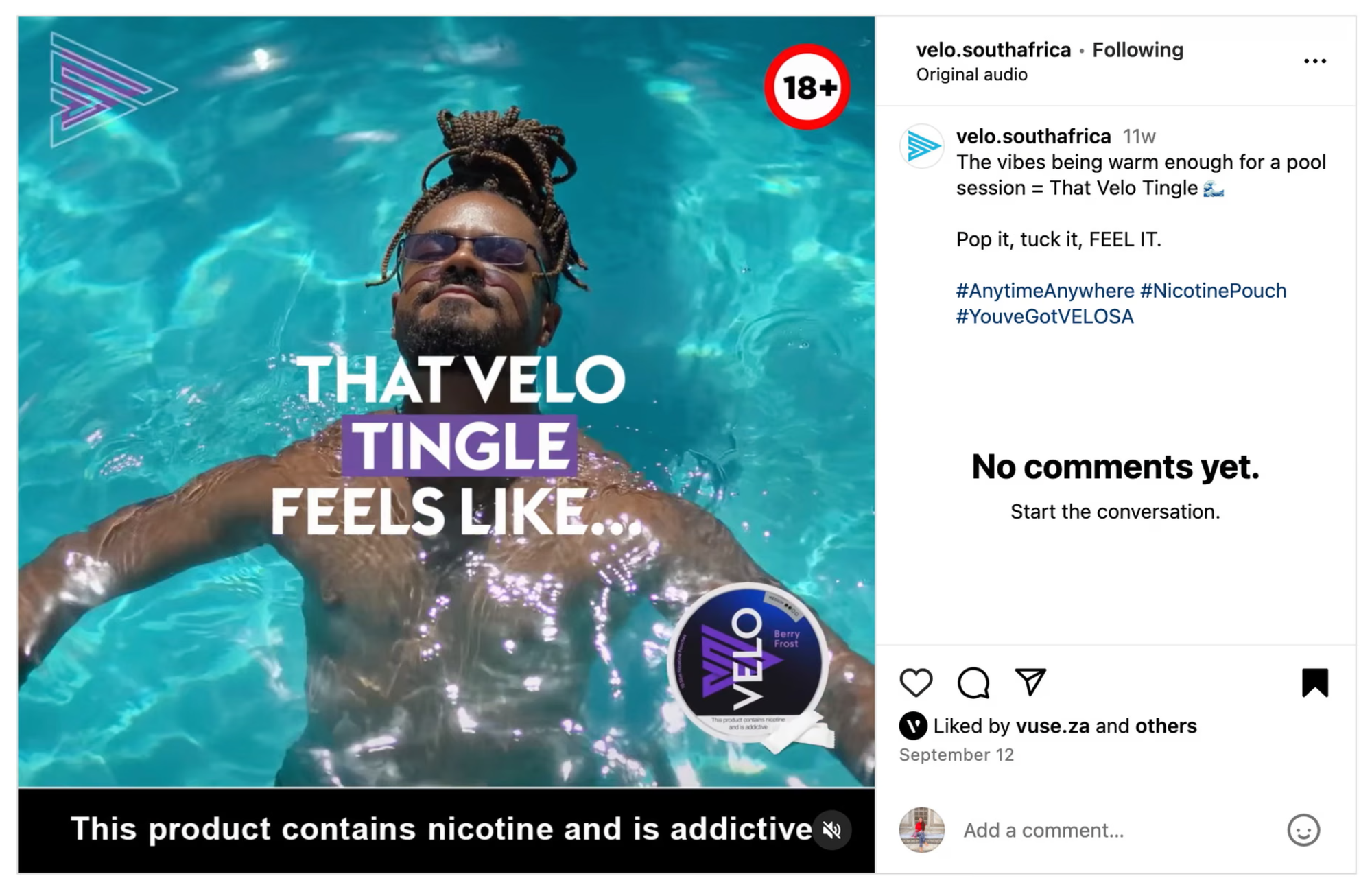

A 2024 JAMA Network Open study examined 2,071 Instagram posts from 25 synthetic nicotine e-cigarette brands. Only 13% adhered to FDA health warning requirements. Posts advertising flavored products drew more likes and comments, while posts with health warnings received measurably fewer — particularly when the warning occupied more visual area. Truth Initiative reported that nearly 90% of Instagram posts by tobacco companies violated either federal warning rules or youth-marketing guidance, with many posts using youth-appealing visuals and tech-forward aesthetics. Research from Boston University and UPenn confirmed the same pattern.

The takeaway: the things that improve compliance actively reduce engagement. Brands in restricted categories aren't dealing with a checkbox problem. They're dealing with an optimization problem where the legal requirement and the performance incentive pull in opposite directions. That tension recurs every campaign cycle, which makes it the kind of recurring pain software can charge for.

TTB monitors alcohol advertising for mandatory information, false claims, health-related statements, and misleading depictions, and explicitly offers a voluntary pre-clearance review service. On March 2, 2026, TTB published guidance specifically warning alcohol advertisers to review AI-generated imagery for compliance — ensuring AI imagery doesn't mislead on product characteristics and includes all mandatory information. When regulators start writing guidance for AI-made ads, you're stepping into a live workflow problem.

Gambling adds a third enforcement surface. On March 23, 2026, Google Ads is updating its Gambling and Games policy with new certification requirements: all accounts must demonstrate "good policy health," and Manager Accounts with significant policy violations will lose certification privileges entirely. That means chronic violators lose ad access at the account and MCC level — compliance is now directly tied to distribution and revenue. Meta prohibits tobacco and nicotine promotion outright and restricts alcohol and gambling under separate policy regimes. The compliance cliff is already embedded in the media-buying environment.

AI is compounding the problem from the other direction. When the content engine speeds up but the regulatory surface stays the same or tightens, the gap between "what gets made" and "what gets approved" widens. That gap is your market.

The Insight

Most founders will treat this as a compliance product. That framing is too small.

Compliance and persuasion are built from the same atoms. Youth appeal, glamour, health framing, urgency, authority, aspirational identity, flavor language, transformation language, "cleaner alternative" positioning, testimonial logic, before/after structure. These aren't just legal categories. They're persuasion primitives. Every effective ad in a restricted category uses some combination of them. Every regulator and platform policy is specifically watching for the same combination.

Build a taxonomy that maps those primitives to both performance outcomes and risk surfaces, and you're no longer selling "please don't get sued." You're selling "keep the hook, lose the landmine." The customer doesn't want a compliance lecture. They want a rewrite that preserves conversion energy while reducing rejection probability. That reframing — from legal gatekeeper to creative accelerator — is what separates a tool growth teams actually adopt from one that just makes lawyers comfortable.

The openFDA datasets matter beyond the data itself. The example outputs show structured creative descriptors covering ad formats and channels — Instagram reels in-feed video ads, Instagram stories video ads, YouTube preroll formats. That structure is enough to begin labeling pattern families, creative contexts, and risk profiles. The first moat isn't the data. It's the cross-category ontology you build on top of it.
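To make the ontology idea concrete, here is a minimal Python sketch of what a first pass might look like: a handful of persuasion primitives, each tied to cue phrases and to the risk surfaces that tend to watch for them. Everything in it is an assumption for illustration. The `Primitive` class, the `TAXONOMY` entries, the cue phrases, and the `"regulator"` / `"platform"` labels are hypothetical and do not come from the openFDA datasets or from any platform policy. A real version would be built from labeled ads, not keyword lists.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Primitive:
    """One persuasion primitive and the risk surfaces assumed to watch for it."""
    name: str
    cues: tuple[str, ...]           # illustrative phrases, not a vetted lexicon
    risk_surfaces: tuple[str, ...]  # hypothetical labels: "regulator", "platform"


# Hypothetical seed taxonomy; every entry is a placeholder for illustration.
TAXONOMY = (
    Primitive("health_framing", ("healthier", "safe", "doctor recommended"),
              ("regulator", "platform")),
    Primitive("cleaner_alternative", ("cleaner alternative", "no tar"),
              ("regulator",)),
    Primitive("urgency", ("last chance", "today only"), ("platform",)),
    Primitive("flavor_language", ("mango", "candy", "dessert"),
              ("regulator", "platform")),
)


def flag_primitives(copy: str) -> dict[str, list[str]]:
    """Return each primitive found in the ad copy with the cues that matched."""
    text = copy.lower()
    hits: dict[str, list[str]] = {}
    for primitive in TAXONOMY:
        # Whole-word match so "safe" does not fire on "safer".
        matched = [
            cue for cue in primitive.cues
            if re.search(rf"\b{re.escape(cue)}\b", text)
        ]
        if matched:
            hits[primitive.name] = matched
    return hits


def risk_surfaces(copy: str) -> list[str]:
    """Return the sorted set of risk surfaces touched by the flagged primitives."""
    flagged = flag_primitives(copy)
    surfaces = {
        surface
        for primitive in TAXONOMY
        if primitive.name in flagged
        for surface in primitive.risk_surfaces
    }
    return sorted(surfaces)


if __name__ == "__main__":
    draft = "A cleaner alternative. Mango ice, today only!"
    print(flag_primitives(draft))
    print(risk_surfaces(draft))
```

The point of the structure is that the same record serves both sides of the product: the flagged primitive is the hook a growth team wants to keep, and the attached risk surface is the reason a rewrite might be needed. Performance outcomes would be a third field on each primitive once there is engagement data to attach.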

What The Product Actually Looks Like

Three layers.
