Anthropic shipped a feature last week that tells you more about where AI is headed than any model benchmark. Claude now lets users import saved context from rival assistants — ChatGPT, Gemini, Copilot — through a simple copy-paste workflow. You paste a pre-written prompt into your old chatbot, copy the output, feed it into Claude's settings, and wait up to 24 hours while Claude digests your digital identity.

It's not a polished interoperability standard. It's a live admission that memory portability is strategically important and that nobody has built the infrastructure to do it properly.

The business inside that gap is a B2B SaaS idea with serious teeth. The agentic AI market is projected to surge from $7.8 billion to over $52 billion by 2030, and every one of those agents needs user context to function.

A solo founder or small team can ship the wedge product in 8 to 12 weeks and start generating revenue from prosumers within 90 days. This is a micro SaaS idea that scales into infrastructure.

Claude surged to the number one free app on Apple's App Store after Anthropic declined a Pentagon AI contract that OpenAI accepted. Anthropic reported "unprecedented demand" and outages. The memory import tool was designed to reduce friction for the wave of switchers flooding in. But the feature reveals a gap much bigger than helping people change chatbots. Accumulated context — what AI knows about you, your work, your preferences — is becoming the real switching cost in this market. The systems to manage, govern, and transport that context don't exist yet.

The Market Shift

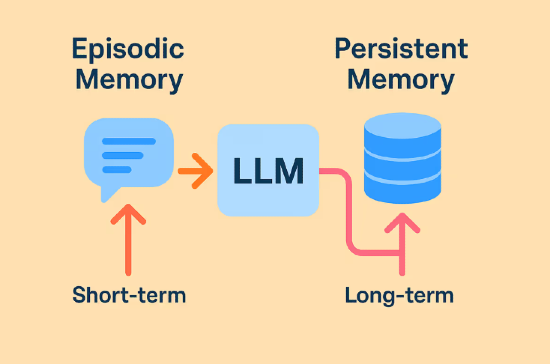

Memory is no longer a niche feature. It's a core product primitive across every major AI platform, and the convergence happened almost simultaneously. Anthropic expanded memory to all users (including free tier) in March 2026. OpenAI has been building persistent memory into ChatGPT since early 2024, with Sam Altman publicly stating it will be central to the product's future. Google documents saved info and personalization controls in Gemini, including enterprise admin settings. Microsoft ships Copilot Memory with admin-level controls.

Every one of them benefits from making it easy to import context and hard to export it. Anthropic's import tool is a copy-paste bridge between two text boxes — no API, no standard schema, no structured transport. The ecosystem has demand before it has infrastructure.

The Insight

The obvious pitch is "build a universal memory sync tool." That will get you killed. Labs can crush a plain export/import utility the moment they decide to care.

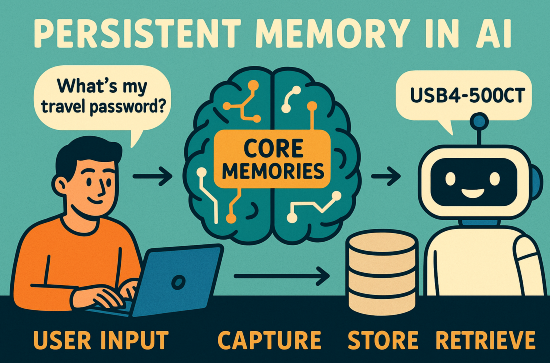

Users don't want one undifferentiated memory blob sprayed across every assistant. They want controlled asymmetry.

Your writing copilot should know your tone, audience, recurring projects, and stylistic preferences. Your travel assistant should know airline loyalty numbers, seat preferences, and passport renewal dates. Your health app should know almost nothing unless explicitly permitted. Your employer's AI should absolutely not absorb your personal life by default.

The category-defining product isn't memory portability. It's memory permissioning: the system that decides what context exists, how it's structured, which assistant gets which slice, what expires, and what's auditable.
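To make "memory permissioning" concrete, here is a minimal sketch of what such a broker might look like, assuming an in-memory store. Every name in it (`ContextItem`, `ContextBroker`, `slice_for`, the assistant labels) is hypothetical and invented for illustration; none of it reflects an existing product or API. It covers four of the five jobs listed above: what context exists, which assistant gets which slice, what expires, and what's auditable.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class ContextItem:
    key: str
    value: str
    scopes: frozenset                      # assistants allowed to read this item
    expires_at: Optional[datetime] = None  # None means the item never expires


@dataclass
class ContextBroker:
    items: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def add(self, key, value, scopes, ttl=None, now=None):
        """Store one piece of context with its allowed assistants and optional lifetime."""
        now = now or datetime.now()
        expires_at = now + ttl if ttl is not None else None
        self.items.append(ContextItem(key, value, frozenset(scopes), expires_at))

    def slice_for(self, assistant, now=None):
        """Return only the unexpired items this assistant is permitted to see."""
        now = now or datetime.now()
        granted = {
            item.key: item.value
            for item in self.items
            if assistant in item.scopes
            and (item.expires_at is None or item.expires_at > now)
        }
        # Record every read so the user can later audit who saw what.
        self.audit_log.append((now, assistant, sorted(granted)))
        return granted


# Hypothetical setup mirroring the examples above.
t0 = datetime(2026, 1, 1)
broker = ContextBroker()
broker.add("tone", "plain, direct", {"writing"}, now=t0)
broker.add("seat_preference", "aisle", {"travel"}, now=t0)
broker.add("trip_dates", "March 3-7", {"travel"}, ttl=timedelta(days=30), now=t0)
```

With this setup, `broker.slice_for("writing", now=t0)` returns only the tone preference, a health assistant with no grants gets an empty dict, and the travel assistant loses `trip_dates` once its 30-day lifetime passes. The design choice worth noticing is default-deny: an assistant sees nothing unless an item explicitly names it in `scopes`.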

Think 1Password for AI context. Okta for assistant permissions. Plaid for context exchange.

This is the same pattern that plays out every software cycle. Once systems start collecting high-value user state, a second layer emerges to organize permissions and trust around that state. Cloud computing created identity and access management. Fintech created data aggregators and consent rails. AI assistants will create context brokers.

The winning company becomes the neutral broker between the human and the growing swarm of AI agents in their life.

Where the Money Is Already Moving

This space is splitting into three layers. Understanding where each sits — and where the gap remains — matters if you want to build something durable.

Layer 1: Model vendors. Claude, ChatGPT, Gemini, Copilot. Their incentive is ecosystem gravity, not neutrality. Anthropic's import tool is unusual because it actively facilitates inbound switching, but that's a strategic move given their current market position — not altruism.
