In 2002, the Pentagon launched the most expensive war game in history.

The budget was $250 million. The goal was to validate a sleek, network-centric vision of modern warfare.

They tapped retired Marine Lieutenant General Paul Van Riper to lead the enemy "Red Team." The planners assumed American electronic dominance would leave him paralyzed.

Van Riper didn't wait to be paralyzed.

Knowing his radios were tapped, he went dark—using motorcycle couriers and light signals to coordinate a silent army. Then, he struck. He unleashed a massive, pre-emptive salvo of cruise missiles hidden among swarms of small speedboats.

The sheer volume overwhelmed the Navy’s high-tech Aegis radar systems. By the afternoon of Day 2, sixteen US ships—including an aircraft carrier—were at the bottom of the virtual ocean.

The Pentagon’s response wasn't to adapt. It was to deny.

They paused the game, "refloated" the sunken ships, and forced Van Riper to follow a script that ensured a US victory.

The episode is a brutal lesson in Validated Delusion.

We often design tests to prove we’re right, not to find out where we’re fragile. But in business, a test you can't fail isn't a safety measure—it's a vanity metric.

Right now, Corporate America is busy "refloating ships."

Companies are rushing to deploy AI agents into high-stakes minefields—insurance claims, chargebacks, medical billing—validated only by "happy path" tests. They are building the Blue Team fleet, assuming the enemy will follow the script.

This is your opportunity to sell them the Red Team.

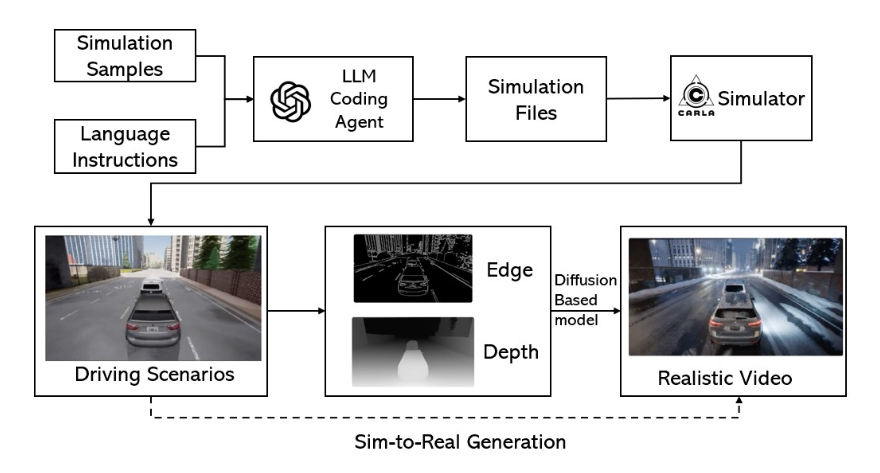

The Scenario Factory is the blueprint for the "safety gate" of the AI era. Instead of selling another generic agent, you use an LLM to generate 300,000 adversarial, constraint-validated scenarios—the "suicide speedboats" of fraud and edge cases—that every model must survive before it touches production.
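Here is a minimal sketch of what that gate could look like, assuming a chargeback vertical. Everything in it is hypothetical: the `Scenario` fields, the two constraints in `is_valid`, the `run_gate` helper, and the 99% threshold are illustrative choices, and the LLM generation step is stubbed with a hand-written list of candidates.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Iterable, Tuple


@dataclass(frozen=True)
class Scenario:
    """One generated chargeback case (hypothetical schema)."""
    purchase_date: date
    dispute_date: date
    amount: float
    is_fraud: bool  # ground-truth label attached by the generator


def is_valid(s: Scenario) -> bool:
    """Constraint check: drop generated cases that could never occur."""
    return s.amount > 0 and s.dispute_date >= s.purchase_date


def run_gate(
    agent: Callable[[Scenario], bool],
    candidates: Iterable[Scenario],
    min_pass_rate: float = 0.99,  # assumed threshold, tune per vertical
) -> Tuple[bool, float]:
    """Score an agent on the valid scenarios; return (approved, pass_rate)."""
    valid = [s for s in candidates if is_valid(s)]
    if not valid:
        raise ValueError("no scenario survived constraint validation")
    passed = sum(agent(s) == s.is_fraud for s in valid)
    rate = passed / len(valid)
    return rate >= min_pass_rate, rate


# Stand-in for LLM output: two plausible cases and two impossible ones.
candidates = [
    Scenario(date(2024, 1, 10), date(2024, 1, 12), 49.99, False),
    Scenario(date(2024, 1, 10), date(2024, 1, 10), 9800.0, True),
    Scenario(date(2024, 3, 1), date(2024, 2, 1), 20.0, False),   # dispute before purchase
    Scenario(date(2024, 1, 10), date(2024, 1, 11), -5.0, True),  # negative amount
]


def happy_path_agent(s: Scenario) -> bool:
    """A Blue Team agent that never suspects fraud."""
    return False


approved, pass_rate = run_gate(happy_path_agent, candidates)
print(approved, pass_rate)
```

The happy-path agent gets the ordinary dispute right and misses the fraudulent one, so the gate refuses to approve it. A real factory would swap the hand-written list for generated scenarios and add far richer constraints, but the shape stays the same: generate, validate, score, block.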

This is unsexy, invisible infrastructure. It is also a massive moat.

By owning the test suite for a vertical like insurance or payments, you aren't fighting for $20/month seats. You are securing $60k–$150k annual contracts to be the governance layer that stops lawsuits before they happen.

The winner in the agent era won’t be the model. It will be the test.

Read the full playbook here:

Synthetic data crossed from experiment to infrastructure as AI agents ship into liability zones without proper tests, creating demand for constraint-validated scenario engines.

From the Vault:

Pop Mart printed $1.8B selling blind-box uncertainty. Subscription boxes churn at 70% annually. The ones that survive turn surprise into ritual backed by trust.

Dark sky tourism hit $1.47B but reliability remains broken. Verification infrastructure beats discovery filters when hobbyists spend $5K-$15K on gear and drive seven hours.