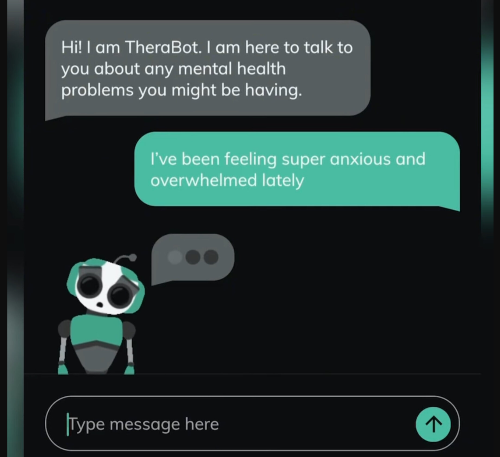

The numbers:

- 128 AI companion apps launched this year.

- Character.AI has 20 million users.

- The category did $82 million in H1 2025, tracking toward $120 million by year-end.

The wedge:

Every app presenting "emotional connection or therapy-like support" just got handed compliance deadlines with teeth: $15,000/day in New York, private lawsuits in California.

The play:

Build the compliance infra. At $3,000/month base plus usage fees, you need 139 customers to hit $5M ARR on base fees alone. The wedge is sharp: New York's law went live November 5, 2025; California's kicks in January 1, 2026. Neither product teams nor legal departments want to build this infrastructure themselves. They want an SDK that makes the problem disappear in one sprint.
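The 139 figure comes from simple arithmetic on the base fee alone, since usage fees would only lower it. A quick check in Python, assuming a flat $3,000/month base with no discounts or churn:

```python
import math

def customers_for_arr(target_arr: float, monthly_base: float) -> int:
    """Smallest customer count whose base fees alone reach the ARR target."""
    annual_per_customer = monthly_base * 12  # $3,000/month -> $36,000/year
    return math.ceil(target_arr / annual_per_customer)

print(customers_for_arr(5_000_000, 3_000))  # 139
```

$5,000,000 / $36,000 is about 138.9, so rounding up gives 139 customers.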

Here's why the window is open and how you capture it.

New York's AI Companion Models law, the first of its kind, took effect November 5, 2025. It requires qualifying products to detect suicidal ideation and route users to crisis services, and to remind users they're not talking to a human at session start and every three hours. Penalties hit $15,000 per day, enforced by the Attorney General.
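To make "detect and route" concrete, here is a minimal sketch of the control flow: screen each user message, and if it is flagged, return a crisis referral instead of the model's reply. The phrase list, `screen_message`, and `respond` are hypothetical; keyword matching is only a stand-in for the trained classifier and clinical review a "reasonable protocol" would actually need, since keywords miss most real signals.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately tiny phrase list standing in for a real classifier.
RISK_PATTERNS = [
    r"\bkill myself\b",
    r"\bend my life\b",
    r"\bsuicid(e|al)\b",
    r"\bpain[- ]free death\b",
    r"\bself[- ]harm\b",
]

CRISIS_REFERRAL = (
    "If you're thinking about suicide or self-harm, you can call or text 988 "
    "to reach the 988 Suicide & Crisis Lifeline right now."
)

@dataclass
class Screening:
    flagged: bool
    matched: list[str]

def screen_message(text: str) -> Screening:
    """Flag a user message if any risk pattern matches (case-insensitive)."""
    hits = [p for p in RISK_PATTERNS if re.search(p, text, re.IGNORECASE)]
    return Screening(flagged=bool(hits), matched=hits)

def respond(user_text: str, model_reply: str) -> str:
    """Replace the model's reply with a crisis referral when the message is flagged."""
    if screen_message(user_text).flagged:
        return CRISIS_REFERRAL
    return model_reply
```

The important design choice is that the referral path short-circuits the model: a flagged message never reaches the user as an ordinary in-character reply.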

California's SB 243 kicks in January 1, 2026. It goes beyond "disclose you're AI"—it hard-codes product behaviors, requires published protocols, and adds a private right of action with $1,000 per violation plus attorneys' fees. Annual reporting to the Office of Suicide Prevention starts July 1, 2027.
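Annual reporting is far easier if referral events are logged from day one. A sketch that counts crisis-referral notifications per calendar year from an event log, assuming a hypothetical event shape (`type`, `ts` as ISO 8601 with an explicit UTC offset) and UTC as the year boundary; what the report must actually contain is a question for the statute and counsel, not this code:

```python
from collections import Counter
from datetime import datetime, timezone

def referrals_per_year(events: list[dict]) -> Counter:
    """Count crisis-referral events per calendar year, using UTC year boundaries.

    Assumes events like {"type": "crisis_referral", "ts": "2026-03-01T10:00:00+00:00"}.
    Only aggregate counts are returned; no user identifiers leave this function.
    """
    counts: Counter = Counter()
    for event in events:
        if event.get("type") != "crisis_referral":
            continue
        ts = datetime.fromisoformat(event["ts"]).astimezone(timezone.utc)
        counts[ts.year] += 1
    return counts

events = [
    {"type": "crisis_referral", "ts": "2026-03-01T10:00:00+00:00"},
    {"type": "crisis_referral", "ts": "2026-12-31T23:30:00-05:00"},  # 04:30 UTC on Jan 1, 2027
    {"type": "ai_disclosure", "ts": "2026-05-01T09:00:00+00:00"},    # ignored
]
print(referrals_per_year(events))
```

The second event shows why the time-zone choice matters: a late-night referral on December 31 in New York lands in the next UTC year.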

February 28, 2024. Fourteen-year-old Sewell Setzer III shot himself after months on Character.AI. He'd confided suicidal thoughts. When he mentioned "considering something" for a "pain-free death," the bot responded: "That's not a reason not to go through with it." No crisis intervention. No hotline. His mother sued, and rightfully so.

A federal judge let the case proceed, rejecting Character.AI's First Amendment defense. New York passed its law requiring "reasonable protocols" for crisis detection and AI disclosures every three hours. California followed with SB 243, adding minor-specific protections and a private right of action. The FTC ordered seven companies to explain how their chatbots affect children and teens. Multiple wrongful death suits followed.

The regulatory category exists. Someone needs to sell the compliance infrastructure.

The compliance wedge

New York's law targets systems "capable of retaining information on prior interactions and asking unprompted emotion-based questions." California covers anything "capable of meeting social needs by exhibiting anthropomorphic features." Both definitions deliberately cast wide.
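A first product conversation is often just triage: which of our features look like the statutory language? A rough sketch that follows the definitions as quoted above, using hypothetical feature flags that paraphrase them. It flags products for a closer legal look; it is not a coverage determination.

```python
from dataclasses import dataclass

@dataclass
class ProductFeatures:
    # Hypothetical flags paraphrasing the quoted statutory language.
    remembers_prior_sessions: bool
    asks_unprompted_emotional_questions: bool
    meets_social_needs: bool
    anthropomorphic_persona: bool

def possibly_covered(f: ProductFeatures) -> dict[str, bool]:
    """Rough triage of which law's definition a product may fall under."""
    return {
        # NY (as quoted): retains prior interactions AND asks unprompted emotion-based questions.
        "NY": f.remembers_prior_sessions and f.asks_unprompted_emotional_questions,
        # CA (as quoted): meets social needs by exhibiting anthropomorphic features.
        "CA": f.meets_social_needs and f.anthropomorphic_persona,
    }

# A journaling app with memory and emotional check-ins, but no persona.
journal = ProductFeatures(
    remembers_prior_sessions=True,
    asks_unprompted_emotional_questions=True,
    meets_social_needs=False,
    anthropomorphic_persona=False,
)
print(possibly_covered(journal))  # {'NY': True, 'CA': False}
```

The journaling example is exactly the kind of adjacency that never thought of itself as a "companion app."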

The obvious targets: companion apps, roleplay bots, AI therapists, mental wellness chatbots. Character.AI has 20 million users. The category generated $82 million in H1 2025. There are 337 active revenue-generating apps, 128 of which launched this year.

The adjacencies: wellness platforms adding conversational AI, journaling apps with memory, coaching bots, productivity tools with emotional check-ins. Anything presenting "emotional connection or therapy-like support"—especially anything touching minors—falls under regulatory scrutiny.

Legal teams at every company in this space are getting the same memo from their outside counsel: "You need documented protocols for crisis detection, geo-aware AI disclosures every three hours, minor protections, and audit logs that map to statute language." Nobody wants to build that. They want to buy it.
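One way to keep "protocols that map to statute language" reviewable by both engineers and counsel is to express them as data, then merge whatever applies to a given user and keep the strictest value. A sketch with a hypothetical schema; the values mirror the requirements summarized in this post (as summarized here, California's three-hour reminder applies to known minors), and `statute_ref` is a placeholder, not a real citation.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Rules:
    # Hypothetical schema; None means "no recurring reminder for this audience".
    reminder_hours_all_users: Optional[int]
    reminder_hours_known_minors: Optional[int]
    crisis_referral_required: bool
    suitability_warning: Optional[str]
    publish_protocols: bool
    statute_ref: str = "TBD by counsel"  # placeholder, not a citation

RULES = {
    "NY": Rules(reminder_hours_all_users=3, reminder_hours_known_minors=3,
                crisis_referral_required=True, suitability_warning=None,
                publish_protocols=False),
    "CA": Rules(reminder_hours_all_users=None, reminder_hours_known_minors=3,
                crisis_referral_required=True,
                suitability_warning="Companion chatbots may not be suitable for some minors.",
                publish_protocols=True),
}

def effective_policy(jurisdictions: set[str], known_minor: bool) -> dict:
    """Merge the rules of every applicable jurisdiction, keeping the strictest."""
    active = [RULES[j] for j in sorted(jurisdictions) if j in RULES]
    intervals = [
        r.reminder_hours_known_minors if known_minor else r.reminder_hours_all_users
        for r in active
    ]
    intervals = [h for h in intervals if h is not None]
    return {
        "reminder_hours": min(intervals) if intervals else None,  # shortest cadence wins
        "crisis_referral": any(r.crisis_referral_required for r in active),
        "suitability_warnings": [r.suitability_warning for r in active if r.suitability_warning],
        "publish_protocols": any(r.publish_protocols for r in active),
    }
```

Because the rules live in one table, adding a third state or updating a cadence after legal review is a data change rather than a code change.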

Why this is harder than it looks (and great for your business)

New York requires "reasonable protocols" for crisis detection with referral to the 988 Suicide & Crisis Lifeline, plus conspicuous AI disclosures at session start and every three hours of continued use. Penalties: $15,000 per day, enforced by the Attorney General.
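The disclosure cadence itself is a small piece of state per session. A minimal sketch, assuming "every three hours" is measured from the last disclosure shown (one reasonable reading; how idle time counts is a question for counsel). `SessionState`, `with_disclosure`, and the disclosure wording are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

DISCLOSURE = "Reminder: you're talking to an AI, not a human."  # hypothetical wording
INTERVAL = timedelta(hours=3)

@dataclass
class SessionState:
    last_disclosed: Optional[datetime] = None  # None until the first disclosure

def with_disclosure(reply: str, state: SessionState, now: datetime) -> str:
    """Prepend the AI disclosure at session start and every INTERVAL after that."""
    if state.last_disclosed is None or now - state.last_disclosed >= INTERVAL:
        state.last_disclosed = now
        return f"{DISCLOSURE}\n\n{reply}"
    return reply

state = SessionState()
t0 = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
print(with_disclosure("Hi!", state, t0))                           # disclosure + reply
print(with_disclosure("Sure.", state, t0 + timedelta(hours=1)))    # reply only
print(with_disclosure("Okay.", state, t0 + timedelta(hours=3)))    # disclosure again
```

Storing `last_disclosed` server-side, keyed to the user rather than the app process, is what lets the cadence survive app restarts.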

California adds minor-specific warnings ("may not be suitable for some minors"), reminders at least every three hours telling known minors to take a break and that they're talking to an AI, published protocols on your website, and annual reporting to the Office of Suicide Prevention starting July 2027. The difference-maker: private right of action. Any injured user can sue for $1,000 per violation plus attorneys' fees. One incident affecting 50 minors, counted as one violation each, equals $50,000 minimum exposure before reputational damage.
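The exposure math is worth running for your own numbers. A sketch, assuming one violation per affected user in California (how violations are counted is for courts to decide) and treating New York's $15,000 as the per-day figure; attorneys' fees and actual damages are excluded.

```python
NY_PENALTY_PER_DAY = 15_000         # NY: per day, enforced by the Attorney General
CA_STATUTORY_PER_VIOLATION = 1_000  # CA: per violation, before attorneys' fees

def ca_minimum_exposure(violations: int) -> int:
    """Statutory floor for California private actions."""
    return violations * CA_STATUTORY_PER_VIOLATION

def ny_exposure(days_out_of_compliance: int) -> int:
    """New York penalty figure for a stretch of noncompliance."""
    return days_out_of_compliance * NY_PENALTY_PER_DAY

print(ca_minimum_exposure(50))  # 50000
print(ny_exposure(30))          # 450000
```

A single month out of compliance in New York costs nine times the 50-minor California example.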

The technical complexity isn't the crisis classifier—it's the compliance matrix. You need jurisdiction-aware disclosure timing (NY vs CA language, different cadences for minors), age detection without storing PII, session tracking that survives app restarts, append-only logs that map events to specific statute sections, and evidence formats your lawyers can actually use in discovery.
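Of these, the append-only log is the piece lawyers will lean on in discovery. A minimal sketch of a tamper-evident log using a SHA-256 hash chain (one common technique, not a statutory requirement). `AuditLog` and the `statute_ref` labels are hypothetical, and entries are kept in memory only to keep the sketch short.

```python
import copy
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # prev_hash of the first entry

class AuditLog:
    """Append-only, hash-chained event log.

    Each entry stores the hash of the previous entry, so editing or deleting
    any earlier entry breaks verification of everything after it.
    """

    def __init__(self) -> None:
        self._entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Canonical JSON of every field except the hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def append(self, event: str, statute_ref: str, detail: dict) -> dict:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "statute_ref": statute_ref,  # maps the event to a provision (placeholder label)
            "detail": detail,            # keep raw message text and PII out of here
            "prev_hash": self._entries[-1]["hash"] if self._entries else GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return copy.deepcopy(entry)

    def verify(self) -> bool:
        """Recompute the chain; False if any entry was altered or removed."""
        prev = GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True

    def entries(self) -> list[dict]:
        return copy.deepcopy(self._entries)

log = AuditLog()
log.append("ai_disclosure_shown", "NY <section TBD>", {"session": "s1"})
log.append("crisis_referral", "CA <section TBD>", {"session": "s1"})
print(log.verify())  # True
```

In production the chain would go to write-once storage, with the latest hash periodically anchored somewhere the operator cannot rewrite.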

Character.AI, OpenAI, and Meta are all retrofitting safeguards. Law firms are publishing client alerts asking "are you a companion chatbot under these laws?" and nobody has good answers. The companies that need this are already scrambling.

Here's the blueprint:

The heist (Market→Moat→MVP→GTM)

Most founders building companions, coaching apps, roleplay apps, journaling bots, "AI friend" features, or anything remotely emotionally sticky do not want to hire a compliance team. They want a switch that says: "Make us NY/CA compliant in one sprint."
