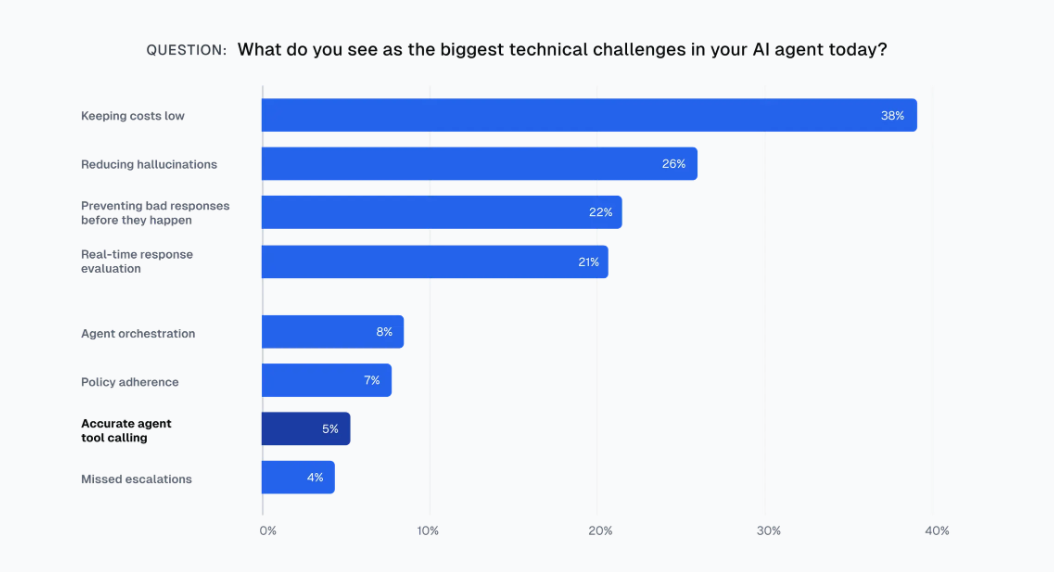

AI agents are shipping into production at a pace the industry can't safely absorb. Enterprise AI spending hit $37 billion in 2025. McKinsey found 62% of companies are experimenting with agents, 23% are already scaling them, and the broader agentic AI market is growing 40–50% annually. Yet a Cleanlab survey of enterprise leaders found fewer than 5% of production agent teams even worry about accurate tool calling — the basic plumbing that determines whether an agent does what it claims.

Model capability isn't the bottleneck anymore. What's missing are repeatable, verifiable environments where agents can fail safely without corrupting real data or burning real money. That gap — agent testing infrastructure — is emerging as a distinct product category inside the multi-billion-dollar AI infrastructure market, and it's growing fast.

This is a micro-SaaS idea a technical solo founder can ship with tiered B2B pricing ($499–$1,500/mo per team) and reach $24K–$50K MRR with 30–50 customers.
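The MRR range above can be sanity-checked with a few lines of arithmetic. The sketch below is illustrative only: the $499 and $1,500 price points and the 30–50 customer counts come from the figures above, while the $999 middle tier and the split of customers across tiers are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    price_per_month: int  # USD per team per month
    teams: int            # number of paying teams on this tier


def monthly_recurring_revenue(tiers):
    """Sum price x team count across all tiers."""
    return sum(t.price_per_month * t.teams for t in tiers)


# Hypothetical mixes: the $999 tier and the per-tier counts are assumptions.
low_mix = [Tier("starter", 499, 15), Tier("growth", 999, 10), Tier("scale", 1500, 5)]
high_mix = [Tier("starter", 499, 15), Tier("growth", 999, 20), Tier("scale", 1500, 15)]

print(monthly_recurring_revenue(low_mix))   # 24975 with 30 customers
print(monthly_recurring_revenue(high_mix))  # 49965 with 50 customers

# Bounds if every customer sat on a single tier.
print(30 * 499, 50 * 1500)                  # 14970 75000
```

Under these assumptions, the quoted range corresponds to a blended average of roughly $800–$1,000 per team per month, so the customer mix matters as much as the headcount.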

A single enterprise deal accelerates that considerably. The buyers already have QA and observability budget. The infrastructure barely exists yet.

Four signals confirm the category is forming. Halluminate (YC S25) builds realistic browser simulators for agent evaluation; one customer saw roughly 20% improvement in date-picking accuracy after training on its flight booking simulator. Anthropic has discussed spending over $1 billion on RL environments, and Mechanize, which pays engineers $500K, is already working with the company. Salesforce launched Agentforce Testing Center, productizing sandbox testing with synthetic data and observability as core agent lifecycle tooling. And Coval ($3.3M seed, backed by YC and General Catalyst) is porting self-driving car evaluation methodology directly to AI agents: founder Brooke Hopkins built Waymo's evaluation infrastructure and recognized the problems were identical.

Agent testing infrastructure is becoming a first-class product category. Here's the playbook.

The Asymmetric Wedge: Agent QA, Not Training Worlds

Two lanes exist in this space. Lane one: sell RL training environments to frontier labs. Lane two: sell testing and evaluation infrastructure to the thousands of companies building agents on top of foundation models.

Lane one is a knife fight. Mechanize and Prime Intellect are well-funded, well-connected, and selling to a buyer pool of maybe a dozen organizations. The talent war alone ($500K salaries for environment engineers) tells you this isn't a solo-builder play. Halluminate is already working with leading computer-use model labs and the largest browser agent companies. That lane is contested.

Lane two is wide open, and it's where AI startup ideas actually pencil out for small teams.

The buyers are agent-building teams at mid-market and growth-stage companies. They don't need to train foundation models. They need to know that the agent they shipped last Tuesday still works after a model update, a prompt tweak, or a UI change upstream. Their problems are practical, so build and test accordingly.
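As a rough illustration of what "still works after a model update" could mean in practice, here is a minimal regression-check sketch. Everything in it is hypothetical: `ToolCall`, `Scenario`, `run_regression`, and the two stub agents are names invented for this example, and a real harness would call an actual agent rather than a stub.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ToolCall:
    name: str
    args: dict


@dataclass
class Scenario:
    prompt: str
    expected: list  # the ToolCall sequence the agent should produce


# An agent here is any callable mapping a prompt to a list of tool calls.
Agent = Callable[[str], list]


def run_regression(agent: Agent, scenarios: list) -> list:
    """Replay each scenario and return the prompts whose tool calls changed."""
    failures = []
    for scenario in scenarios:
        if agent(scenario.prompt) != scenario.expected:
            failures.append(scenario.prompt)
    return failures


# Hypothetical stand-ins for the same agent before and after an update.
def agent_before_update(prompt: str) -> list:
    return [ToolCall("search_flights", {"date": "2025-03-14"})]


def agent_after_update(prompt: str) -> list:
    # Simulated regression: the date argument drifts by one day.
    return [ToolCall("search_flights", {"date": "2025-03-15"})]


scenarios = [
    Scenario(
        prompt="Book a flight for March 14, 2025",
        expected=[ToolCall("search_flights", {"date": "2025-03-14"})],
    )
]

print(run_regression(agent_before_update, scenarios))  # []
print(run_regression(agent_after_update, scenarios))   # ['Book a flight for March 14, 2025']
```

Exact-match comparison is the simplest possible check. A production harness would likely need fuzzier matching and a sandboxed environment, but the core loop of replaying fixed scenarios and diffing tool calls stays the same.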
