ChatGPT starts selling ad space inside your conversations. The consumer outrage is your distribution hack—but the real business is AI governance infrastructure for teams who can't afford compromised outputs. Schools, law firms, agencies, and healthcare orgs are already asking "how do we know this answer isn't influenced?" You're about to give them an answer worth $25-40/seat/month.

OpenAI just announced they're testing ads in ChatGPT—starting "in the coming weeks" for free users and the new $8/month Go tier in the U.S.

The ads appear at the bottom of answers, labeled "Sponsored." They won't influence responses, OpenAI says. Your data won't be sold to advertisers, they promise. Personalization will be opt-out, not opt-in.

The history here is straightforward: platforms always start tasteful, then drift toward profitable. Google promised "relevant" ads in 2000. Facebook promised "better user experience" in 2007. YouTube promised "appropriate content" in 2012. They all kept those promises—right up until revenue pressure mounted and the quiet erosion began.

OpenAI is projected to burn $115 billion through 2030. Only about 5% of its 800 million weekly users pay for subscriptions, which means roughly 35 million people are subsidizing hundreds of millions of free riders. The math doesn't work without ads.

Even the $8/month Go plan gets ads. You can pay and still see sponsored content. That's not a pricing tier—it's a new class system in AI, and it creates a business opening.

The Real Opportunity Isn't Blocking Ads

The obvious play is building an ad-blocker for ChatGPT. Fast money? Sure. Durable business? No.

Standard ad-blockers will struggle with native ads embedded in conversational text. You'd need specialized filtering for AI-specific placements. Technically solvable, but three problems kill this as a standalone business:

The platform can break you overnight. When ChatGPT changes how it renders ads (and it will), your selectors break. You're playing catch-up every week.

The giants will absorb this. uBlock Origin, AdGuard, Brave—they'll ship "ChatGPT ad rules" as a weekend update. You're competing against free, battle-tested infrastructure.

ToS and legal risk. You're interfering with someone else's revenue model. That's a lawyer magnet.

An ad-blocker for ChatGPT is a feature, not a company. The real opportunity is one level up.

What Actually Wins: Trust-First AI Infrastructure

The wedge is ads. The business is AI Output Governance.

Once commercial incentives enter conversational AI, every answer becomes suspect—not wrong, just potentially influenced. That uncertainty is poison for trust.

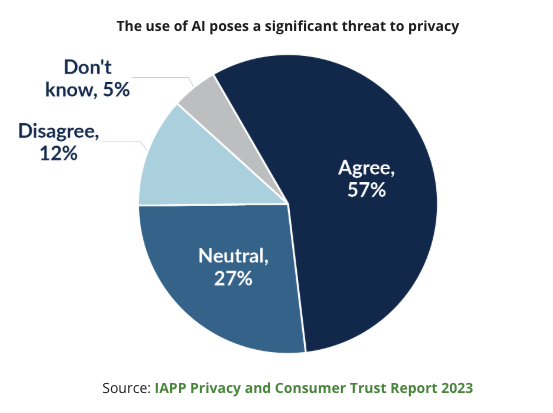

57% of consumers already believe AI threatens their privacy. 62% don't trust AI to make ethical decisions. 81% worry about data misuse. Those numbers predate ChatGPT ads.

The winning move isn't blocking the ad box. It's owning the layer where answers are guaranteed clean.

Critical positioning note: You're not fighting the platforms—you're sitting adjacent to them. You're a paying API customer who adds value on top. This matters legally and strategically. Being "anti-OpenAI" makes you toxic. Being "the governance layer that works with any AI provider" makes you infrastructure. Keep this important distinction in mind when following the playbook below.

The Product: Policy-Controlled AI Access

Build a middleware platform that routes AI requests through your policy engine.

Users don't talk to ChatGPT directly. They talk through your interface, where you enforce rules.
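To make the middleware idea concrete, here is a minimal Python sketch of a gateway that checks each request against a policy before forwarding it to a provider. Everything named here (`Policy`, `PolicyGateway`, `echo_provider`, the email-redaction rule, the audit log shape) is a hypothetical placeholder for illustration, not a description of any real product or vendor API, and the two rules shown are stand-ins rather than the modes this playbook goes on to list.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Simple illustrative pattern; real PII detection would need far more than this.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


@dataclass
class Policy:
    """Hypothetical rule set a team admin might configure."""
    name: str
    allowed_providers: List[str]
    redact_emails: bool = True


@dataclass
class PolicyGateway:
    """Sits between users and AI providers and enforces one Policy."""
    providers: Dict[str, Callable[[str], str]]  # provider name -> callable
    policy: Policy
    audit_log: List[dict] = field(default_factory=list)

    def ask(self, provider_name: str, prompt: str) -> str:
        # Rule 1: only route to providers the policy allows.
        if provider_name not in self.policy.allowed_providers:
            self.audit_log.append({"provider": provider_name, "allowed": False})
            raise PermissionError(
                f"{provider_name!r} is not allowed by policy {self.policy.name!r}"
            )
        # Rule 2: redact email addresses before the prompt leaves the gateway.
        outgoing = prompt
        if self.policy.redact_emails:
            outgoing = EMAIL.sub("[redacted-email]", prompt)
        answer = self.providers[provider_name](outgoing)
        # Record what was actually sent, so the team can review it later.
        self.audit_log.append(
            {"provider": provider_name, "allowed": True, "prompt": outgoing}
        )
        return answer


def echo_provider(prompt: str) -> str:
    """Stand-in for a real API call; a real provider client would go here."""
    return f"echo: {prompt}"


gateway = PolicyGateway(
    providers={"stub": echo_provider},
    policy=Policy(name="school-default", allowed_providers=["stub"]),
)
print(gateway.ask("stub", "Email jane@example.com the summary"))
```

The design point is that the provider is just a callable behind the gateway, so the same policy and audit trail apply regardless of which AI vendor handles the request.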

Start with the following 5 modes:
