In 1999, Napster taught the music industry a lesson: you can't sue a new distribution primitive into disappearing. You either own the rails, or you become a museum exhibit with great merch.

The same dynamic is playing out again, except this time the primitive isn't free MP3 sharing—it's infinite music generation.

In June 2024, the RIAA sued Suno and Udio for copyright infringement on behalf of Universal, Sony, and Warner. The lawsuits accused the AI music platforms of training models on millions of copyrighted songs without permission, which the labels called infringement on an "almost unimaginable scale."

By October 2025, Universal had settled with Udio. Not just settled, but partnered. A joint platform launching in 2026, trained on licensed music, with opt-in controls and new revenue streams for artists.

One month later, Warner did the same with Suno. Settlement, partnership, licensed models, revenue splits. Warner even threw in Songkick, its live music discovery platform, to sweeten the deal.

Meanwhile, another AI music company—Klay—reportedly secured licensing deals across all three majors, an early sign the industry is converging on "licensed AI" as a category, not a one-off exception.

The détente is real. The majors aren't accepting AI music. They're productizing the permission. And that creates an opening: agencies and brands deploying AI music need a system that proves their tracks won't blow up a campaign. That verification layer—$249/month for small studios, $999/month for agencies, plus service fees starting at $300 per campaign—becomes the toll booth between generation and commercial deployment.

AI music has a new bottleneck. And it's not creativity anymore. It's proof.

When legal asks "Is this cleared?" you need more than vibes

When Universal settled with Udio in October 2025, the deal included something specific: Udio's platform became a "walled garden." Users can't download tracks from the existing system. Everything stays contained. Why? To prevent AI-generated songs from competing with real artists on Spotify and Apple Music while the licensed platform is being built.

Warner's Suno deal works the same way. New licensed models launching in 2026. Downloads will be restricted under the new framework. The old wild-west era is getting fenced in.

The industry is building guardrails around commercial use. AI music is fine for tinkering. But deploying it in real commerce—ads, podcasts, games, social campaigns—requires something the current tools don't provide: documentation that survives a legal review.

Selling "AI music prompt packs" or "perfect prompts for Suno" means selling recipes in a world where the oven is free and getting better every month. You'll get copied, undercut, commoditized.

The durable business is what your customer can't wing:

- Can I use this track in a paid ad without getting sued?

- Who owns what?

- What model generated this? Was it trained on licensed material?

- If a client asks for indemnity, what do I show them?

- If Spotify requires AI disclosure, how do we comply at scale?

Spotify announced new AI protections in September 2025, requiring metadata disclosure for AI-generated vocals, instrumentation, and post-production via DDEX standards. They've already removed over 75 million spam tracks. Impersonation and undisclosed AI use? Both now violations. The platform is shifting risk to distributors, agencies, and brands—all of whom now need better documentation.

A "compliance-native AI music OS" becomes the real play—a paperwork layer that turns "a track generated by a model" into "a track you can actually deploy in commerce without your legal team having a meltdown."
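
To make that paperwork layer concrete, here is a minimal sketch of how the questions above could be captured as a structured, machine-readable record. Everything in it is an assumption for illustration: the `ClearanceRecord` class, its field names, and the `open_questions` check are hypothetical and do not correspond to any DDEX schema, Spotify requirement, or vendor API.

```python
from dataclasses import dataclass, field, asdict
from typing import List
import json


@dataclass
class ClearanceRecord:
    """Hypothetical per-track record; field names are illustrative only."""
    track_title: str
    model_name: str                     # which model generated the track
    trained_on_licensed_material: bool  # based on the vendor's own attestation
    rights_owner: str                   # who owns the output
    commercial_use_allowed: bool        # e.g. cleared for a paid ad
    # AI involvement, split into the three areas named in Spotify's announcement
    ai_vocals: bool = False
    ai_instrumentation: bool = False
    ai_post_production: bool = False
    evidence: List[str] = field(default_factory=list)  # licences, receipts


def open_questions(record: ClearanceRecord) -> List[str]:
    """Return the items that would still stall a legal review."""
    issues = []
    if not record.model_name:
        issues.append("generating model not recorded")
    if not record.trained_on_licensed_material:
        issues.append("no attestation that training data was licensed")
    if not record.rights_owner:
        issues.append("rights owner not recorded")
    if not record.commercial_use_allowed:
        issues.append("commercial use not granted")
    if not record.evidence:
        issues.append("no supporting documents attached")
    return issues


# Example values are invented for the sketch.
record = ClearanceRecord(
    track_title="Campaign bed v3",
    model_name="example-licensed-model",
    trained_on_licensed_material=True,
    rights_owner="Example Agency LLC",
    commercial_use_allowed=True,
    ai_instrumentation=True,
    ai_post_production=True,
    evidence=["vendor-licence.pdf"],
)

print(open_questions(record))                # prints []
print(json.dumps(asdict(record), indent=2))  # the shareable, machine-readable form
```

The design choice is that "Is this cleared?" gets a list of specific gaps rather than a yes or no, so whoever is assembling the campaign knows exactly which document is still missing.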

The industry is begging for a middle layer

Three signals make this more than theory.

The major label partnerships are real—and they're setting new rules

Universal's Udio deal isn't a press release. It's a settlement plus partnership plus roadmap to a licensed platform launching in 2026. Financial terms undisclosed, but Universal CEO Lucian Grainge called it a demonstration of "commitment to do what's right by our artists and songwriters."

Warner's Suno deal follows the same blueprint: settlement, partnership, opt-in artist controls, and compensation for both training data and output usage. Warner CEO Robert Kyncl described it as "a victory for the creative community."

The majors aren't "accepting AI." They're building the licensing scaffolding that turns unauthorized generation into authorized commerce. And that scaffolding needs infrastructure on the buyer side—documentation systems that agencies and brands can actually trust.

AI music is charting—which means it's monetizing attention

In November 2025, an AI-generated country act called Breaking Rust topped Billboard's Country Digital Song Sales chart with "Walk My Walk." The track moved roughly 3,000 downloads at $1 each to hit #1—a low bar, yes, but it still charted. It still converted listeners into buyers.

Aaron Ryan at Whiskey Riff tracked the operation back to AI projects including Defbeatsai. The entire production appears synthetic: AI vocals, AI instrumentation, AI-generated artwork. No traditional human performers involved.

NPR interviewed Ryan about the track. His take: "It's kind of hard to determine who's behind some of these songs... It's kind of a mystery who's behind it and who's doing it."

The market doesn't run on ethics. It runs on distribution plus conversion. Breaking Rust has 2 million monthly Spotify listeners. "Walk My Walk" generated over 3 million streams in a month. The track exists in commerce whether the industry likes it or not.

And commerce needs receipts.

The supply-side licensing economy is forming

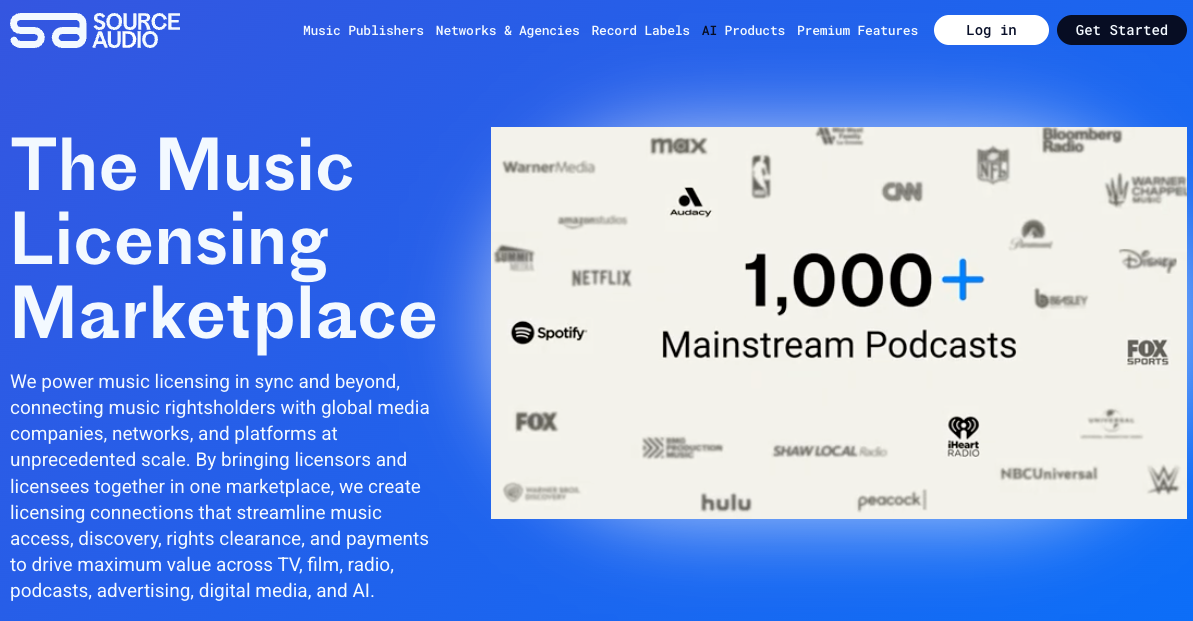

In May 2025, SourceAudio launched what it calls "the first scalable, fully cleared AI music dataset licensing marketplace." The platform offers 14 million+ tracks, 3 million sound effects, and 200 sampled instruments to AI companies through an opt-in model.

In May alone, the marketplace generated over $1.35 million in new annual recurring revenue for rightsholders who opted in. Some catalogs are now earning $100K+ per year from dataset licensing deals.

SourceAudio CEO Andrew Harding said it plainly: "By generating this revenue for our rightsholders, we've proven that responsible AI companies will partner with the music industry when presented with a fully cleared option to train their models."

People are already building the "clean supply chain." They just need the operating system buyers trust.

An "AI Music Passport" that answers the questions legal actually asks

Build a platform that issues machine-readable "AI Music Passports"—a structured certificate with 4 well-defined layers that answers the questions brands and agencies actually care about. Start with the major layer:
