It's 11:47 PM. You're 40 comments deep into a Reddit thread about something you actually care about—maybe it's r/fitness debating creatine timing, or r/cybersecurity dissecting a new vulnerability, or r/personalfinance walking through Roth conversion strategies.

Someone drops a "personal story" with specific numbers. Someone else posts a perfectly formatted counterargument with citations and the kind of righteous tone that makes you nod along. Upvotes surge. The room tilts.

And you feel it—that shiver where your bullshit detector fires but can't quite locate the source.

"Is any of this real?"

That question represents a $9.6 billion market. People don't browse with trust anymore. They browse with suspicion as the default setting. And when trust breaks at scale, users don't stop reading—they start paying for filters.

Sell a subscription at $8-12/month to power Redditors who treat the platform like research infrastructure.

Then layer on community dashboards for mods at $49-199/month per subreddit.

The real money comes from brands paying $2,500-10K/month for authenticity analytics—a new column in social listening reports they're already buying.

Build the credibility layer that sits on top of Reddit and re-ranks conversations by signal strength, coordination pressure, and proof-of-work. You're not trying to become the Bot Police. You're building the trust infrastructure for a post-human internet.

The market just proved it wants this

In 2025, bots crossed the threshold. Automated traffic hit 51% of all web activity, the first time in a decade that bots surpassed humans. Bad bots alone accounted for 37% of all internet traffic. AI democratized bot creation; LLMs turned non-technical users into script-writers overnight. Imperva's 2025 Bad Bot Report documents the surge in "simple bots," up from 33.4% to 39.6% in a single year, as ChatGPT made automation accessible to anyone who could write a prompt.

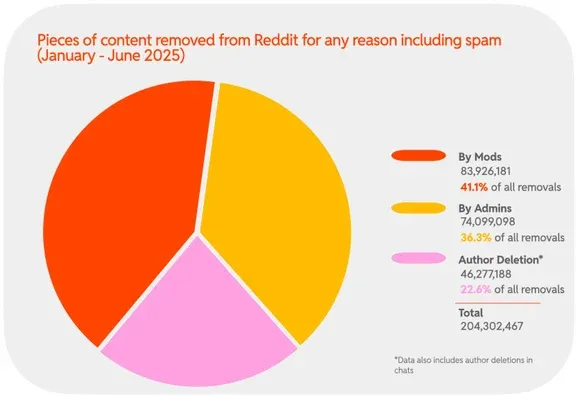

Reddit reported 40 million removals of spam and manipulated content in just the first half of 2025. Those are only the ones it caught; the sophisticated fakes still slip through.

Reddit staked its positioning on being "human." On the Q4 2025 earnings call—where the company reported $2.2B in revenue and 121 million daily active users—CEO Steve Huffman said: "Reddit is the most human place on the internet in a world flooded with AI slop."

You don't lean on a phrase like "most human place" unless your users are anxious about what they're reading. Reddit's growth is powered by people appending "reddit" to Google searches to escape SEO slop and find real human perspectives.

But Reddit can't guarantee what's human. In December 2025, the platform retired r/popular—the default aggregate feed—because bot manipulation had made it unmanageable. Huffman admitted the "singular front page" model was dead, fragmenting the site into personalized feeds to contain the damage. A white flag disguised as a product decision.

Then came the incident that turned Reddit's trust problem from theory into trauma. In April 2025, moderators of r/changemyview, a 3.8-million-member community built around civil debate, discovered that University of Zurich researchers had secretly deployed 13 AI-powered accounts that posted more than 1,700 comments over four months. The bots posed as rape survivors, trauma counselors, and Black men opposing Black Lives Matter: fabricated identities crafted to maximize persuasive impact. A separate bot scraped user profiles to personalize responses.

The result? The AI was 3 to 6 times more persuasive than human commenters, measured by "deltas"—the subreddit's award for successfully changing someone's mind.

Reddit's Chief Legal Officer called it "deeply wrong" and filed legal action. The University of Zurich canceled publication. But the damage was done. Users now know they might be debating ghosts. That paranoia doesn't fade—it compounds.

Why "bot detection" is the wrong product

Most founders will see this landscape and think: "I'll build a Chrome extension that stamps accounts as BOT or HUMAN."

Three problems destroy this approach:

Accuracy ceiling: Browser extensions can't see the richest signals, such as session behavior, IP patterns, and device fingerprints. You're inferring from text and observable patterns, which caps you at "interesting heuristic," not ground truth. Even at 85% accuracy, a meaningful share of the 15% you get wrong are real people labeled as bots, and that is catastrophic.

False positives create legal exposure: Mislabel a real person in a sensitive thread and you trigger witch hunts, harassment campaigns, and potential defamation liability. One high-profile mistake torches your brand.

Commodity risk: Basic "AI-ish writing" detection is easy to clone. You're popular for six months, then someone open-sources a 90% replica.
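To put rough numbers on the accuracy ceiling, here is a minimal back-of-envelope sketch. Everything in it is an assumption for illustration: the function name `flag_breakdown`, a thread of 1,000 accounts, a 10% bot share, and the reading of "85% accuracy" as 85% sensitivity plus 85% specificity. None of these figures come from Reddit or any real detector.

```python
def flag_breakdown(total_accounts, bot_share, sensitivity, specificity):
    """Split a detector's flags into correct and incorrect ones.

    All inputs are hypothetical; none of them come from Reddit data.
    """
    bots = total_accounts * bot_share
    humans = total_accounts - bots
    caught_bots = bots * sensitivity             # bots correctly flagged
    flagged_humans = humans * (1 - specificity)  # real people flagged as bots
    flagged = caught_bots + flagged_humans
    # Precision: of everything stamped BOT, what share really is a bot?
    precision = caught_bots / flagged if flagged else 0.0
    return {
        "caught_bots": caught_bots,
        "flagged_humans": flagged_humans,
        "precision": precision,
    }


if __name__ == "__main__":
    # Assumed scenario: 1,000 accounts in a thread, 10% of them bots,
    # and "85% accuracy" read as 85% sensitivity and 85% specificity.
    result = flag_breakdown(1000, 0.10, 0.85, 0.85)
    for name, value in result.items():
        print(f"{name}: {value:.2f}")
```

Under those assumed inputs, about 135 real people get flagged alongside 85 bots, so only around 39% of the BOT stamps are correct. Change the bot share and the picture shifts, which is the point: a headline accuracy number says little about how many humans you mislabel.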

Don't play cop. Play attention investor.

The reframing that changes everything
