The category comp is the $1.2 billion market for digital adoption platforms, or DAPs (WalkMe, Whatfix, Pendo), projected to reach $4–5 billion by 2033. Nobody has rebuilt that model for ChatGPT, Claude, Perplexity, or Gemini.

A strong founding team can target $500K–$1.5M ARR in year one from Pro users and enterprise pilots, with a clear path to $3–5M ARR as behavioral data compounds. This is an AI startup idea disguised as a productivity tool — and a B2B SaaS play disguised as a coaching product.

Every company now says it is "using AI." The statement has become almost meaningless.

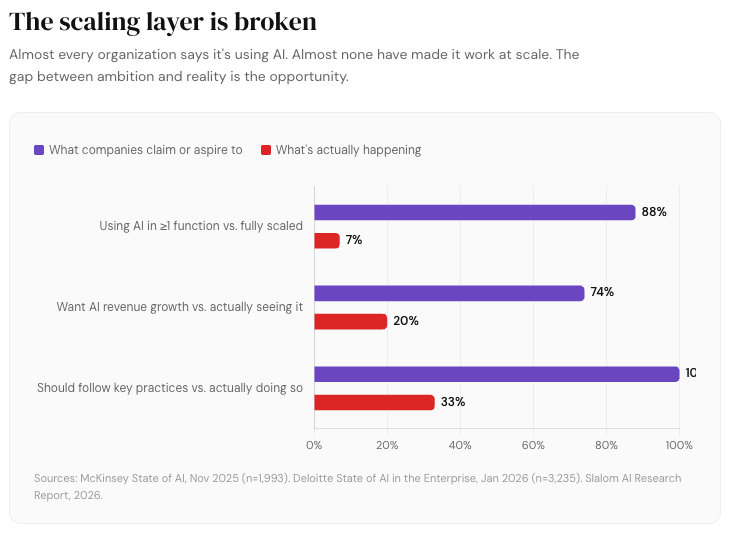

McKinsey's 2025 global survey found 78% of organizations using AI in at least one business function. Deloitte's 2026 survey of 3,235 senior leaders across 24 countries tells a similar story. But the scaling layer is still broken. Only 1% of executives describe their gen-AI rollouts as "mature." Fewer than a third follow key adoption practices. 74% of organizations hope to grow revenue through AI, yet only 20% are doing so. The AI skills gap is now the single biggest barrier to integration.

What looks like a technology problem is actually a behavior problem. The tool is installed. The employee may even be enthusiastic. But most people still prompt badly, iterate badly, verify badly, and waste time in ways invisible to management. Nobody is measuring any of it.

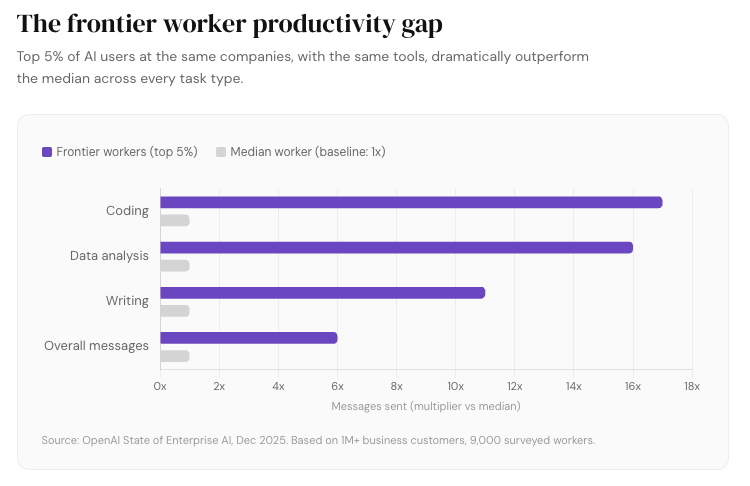

OpenAI's December 2025 State of Enterprise AI report — drawn from over one million business customers and 9,000 surveyed workers — quantifies the damage. "Frontier workers," the top 5% by usage intensity, send 6x more messages than the median employee at the same companies. For coding tasks, the gap explodes to 17x. For data analysis, 16x. Everyone has the same tools. Workers saving more than 10 hours per week consume eight times more computing credits than those reporting no time saved.
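The frontier-versus-median comparison is simple to reproduce on your own usage logs. Below is a minimal sketch, assuming you already have a per-user message count for one period. The name `frontier_ratio`, the rank-based top-5% cutoff, and the sample numbers are illustrative assumptions, not OpenAI's methodology.

```python
from statistics import mean, median


def frontier_ratio(messages_per_user: list[int], top_share: float = 0.05) -> float:
    """Mean messages of the top `top_share` of users divided by the median user's.

    Hypothetical metric for illustration; not the method of any published report.
    """
    if not messages_per_user:
        raise ValueError("need at least one user")
    ranked = sorted(messages_per_user, reverse=True)
    # Keep at least one user in the "frontier" group, even for tiny teams.
    k = max(1, round(len(ranked) * top_share))
    frontier = ranked[:k]
    baseline = median(ranked)
    if baseline == 0:
        return float("inf")
    return mean(frontier) / baseline
```

For example, `frontier_ratio([10] * 19 + [60])` returns `6.0`: one heavy user among twenty sends six times what the median user sends.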

The gap exists between organizations too. Frontier firms generate roughly 2x as many AI messages per employee as the median enterprise. For custom GPTs — purpose-built tools that automate specific workflows — the gap widens to 7x. One in four enterprises still hasn't enabled connectors that give AI access to company data, a basic step that dramatically increases the technology's utility.

The market has a gym membership. What it doesn't have is a trainer, a mirror, or a progress dashboard. That missing layer — AI usage analytics with real-time coaching — is a wide-open micro SaaS idea at the low end and a venture-scale infrastructure play at the high end. Whoever builds it isn't selling prompt tips. They're selling measured improvement in how knowledge workers operate with AI every day.

The Behavioral Evidence

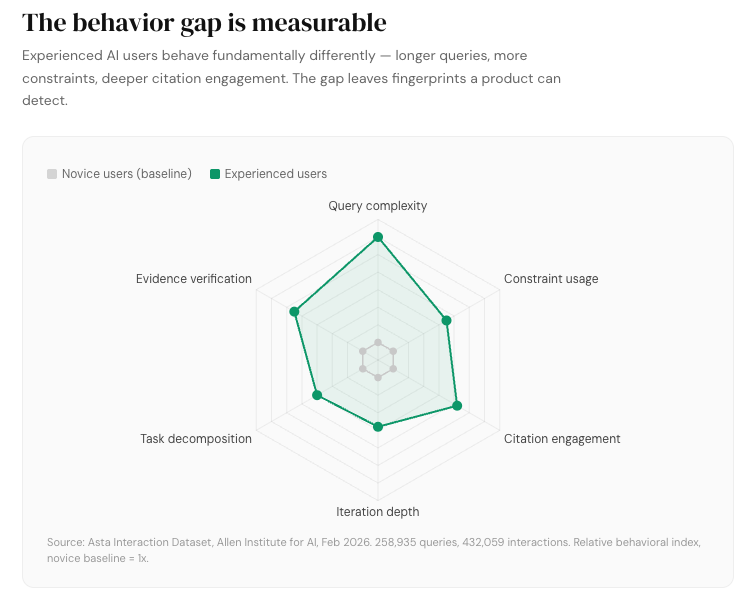

In February 2026, the Allen Institute for AI (Ai2) released the Asta Interaction Dataset: a six-month log of 258,935 real researcher queries and 432,059 clickstream interactions from an AI-powered research assistant integrated with Semantic Scholar. It is one of the largest open datasets of how people actually use an AI-powered research tool.

Users submit dramatically longer and more complex queries to AI tools than to traditional search — seven times longer, with more entities, relationships, and constraints. They treat AI-generated outputs as persistent artifacts, revisiting and navigating citations nonlinearly. As users gain experience, they become more targeted and engage more deeply with evidence. But keyword-style behavior persists even among experienced users. People import prompt habits from general-purpose chat tools — persona assignment, template filling, collaborative writing requests — into domain-specific platforms. The habits they formed in ChatGPT follow them everywhere.

Asta gives you a real behavioral blueprint for the difference between amateur and advanced AI use. It proves the gap is measurable — and it provides a public, large-scale starting point for the kind of task taxonomy and interaction scoring that a product in this space needs.
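As a first pass at such a taxonomy, the habits described above (keyword-style queries, persona assignment, template filling, and constraint-rich questions) can be tagged with simple rules. This is a minimal sketch built on hand-written regular expressions. The patterns, the four-word threshold, and the names `profile_query` and `QueryProfile` are hypothetical and not part of the Asta release; a real product would learn these signals from labeled logs.

```python
import re
from dataclasses import dataclass, field

# Hypothetical hand-written heuristics, for illustration only.
PERSONA = re.compile(r"\b(?:act as|you are an?|pretend to be)\b", re.IGNORECASE)
TEMPLATE = re.compile(r"\[[^\]]+\]|\{[^}]+\}")  # unfilled [SLOT] or {slot} placeholders
CONSTRAINT = re.compile(
    r"\b(?:only|excluding|between|since|at least|published|compared? to)\b"
    r"|\b(?:19|20)\d{2}\b",  # explicit years
    re.IGNORECASE,
)


@dataclass
class QueryProfile:
    words: int
    tags: list[str] = field(default_factory=list)
    constraints: int = 0


def profile_query(text: str) -> QueryProfile:
    """Tag one query with habit labels and count its constraint markers."""
    words = len(text.split())
    profile = QueryProfile(words=words)
    # Very short, non-question input reads like a search-engine keyword query.
    if words <= 4 and "?" not in text:
        profile.tags.append("keyword_style")
    if PERSONA.search(text):
        profile.tags.append("persona_assignment")
    if TEMPLATE.search(text):
        profile.tags.append("template_filling")
    profile.constraints = len(CONSTRAINT.findall(text))
    return profile
```

On `"transformer attention"` the sketch returns only the `keyword_style` tag; on `"Which RLHF papers published since 2022 compare reward models?"` it returns no tags and counts three constraint markers.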

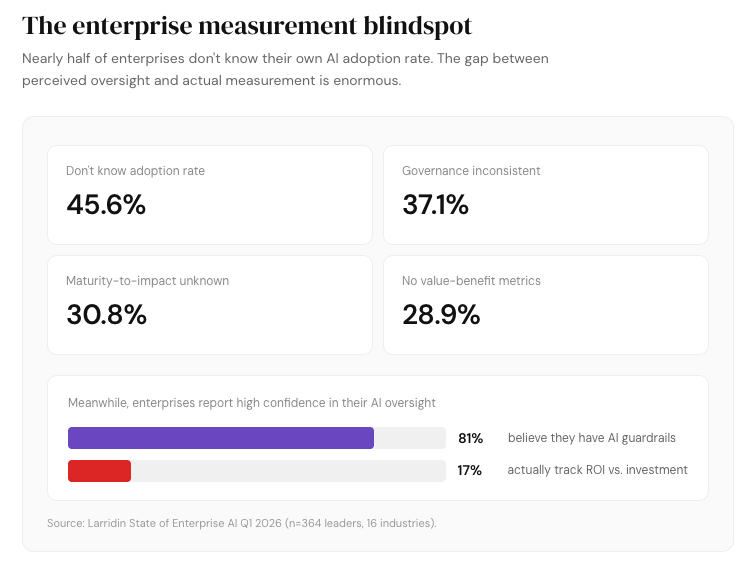

Larridin's 2026 AI Tracker data makes the enterprise side concrete: 45.6% of enterprises say their workforce AI adoption rate is unknown. 30.8% say they don't know how their AI maturity correlates with impact. 28.9% cite a lack of clear value-benefit metrics. Companies are spending heavily on AI but can't tell whether it's working.
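Once usage events exist, the first of those unknowns, workforce adoption rate, is mostly a counting problem. Below is a minimal sketch, assuming AI-tool events can be joined to a seat roster. The data shapes, the 28-day window, and the name `adoption_rate` are hypothetical.

```python
from collections import Counter
from datetime import date, timedelta


def adoption_rate(
    seats: dict[str, str],
    events: list[tuple[str, date]],
    as_of: date,
    window_days: int = 28,
) -> dict[str, float]:
    """Share of licensed seats per team with at least one AI event in the window.

    `seats` maps user_id -> team; `events` are (user_id, event_date) pairs.
    Both shapes are hypothetical stand-ins for whatever telemetry you collect.
    Events from users missing from `seats` are ignored.
    """
    start = as_of - timedelta(days=window_days)
    active = {user for user, day in events if start < day <= as_of}
    totals = Counter(seats.values())
    hits = Counter(team for user, team in seats.items() if user in active)
    return {team: hits[team] / n for team, n in totals.items()}
```

The denominator is deliberately every licensed seat, not every active user, so the rate answers the question those survey respondents could not: how much of the workforce is actually using the tools.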

Why the Existing Solutions Don't Fill the Gap

Most "prompt engineering" products today solve a much shallower problem.

AIPRM has more than 2 million users and 4,000+ curated prompts. PromptBase is a marketplace with 260,000+ prompts. FlowGPT, PromptHero, and a growing list of competitors offer variations on the same theme: libraries, templates, and prompt commerce. These businesses prove demand exists, but they also reveal the category's ceiling. They help users borrow better words. They don't improve user judgment, workflow design, or organizational AI maturity. The gap between a competent AI session and a mediocre one is behavioral. It involves knowing when to decompose a question, when to ask for sources, when to iterate versus restart, and how to evaluate what the model gives you.
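Some of these behaviors can be approximated from conversation logs. Below is a minimal sketch, assuming each user's prompts are grouped by conversation. The source-request pattern, the word-overlap (Jaccard) test for a "restart," the 0.6 threshold, and the function names are hypothetical heuristics, not a validated measure of judgment.

```python
import re

SOURCE_REQUEST = re.compile(r"\b(?:sources?|cite|citations?|references?)\b", re.IGNORECASE)


def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two prompts, from 0.0 to 1.0."""
    sa, sb = set(a.lower().split()), set(b.lower().split())
    union = sa | sb
    return len(sa & sb) / len(union) if union else 0.0


def session_signals(conversations: list[list[str]], restart_threshold: float = 0.6) -> dict[str, int]:
    """Count hypothetical behavior signals across one user's conversations.

    Each conversation is the ordered list of that user's prompts.
    """
    signals = {"follow_ups": 0, "restarts": 0, "source_requests": 0}
    previous_opener = None
    for convo in conversations:
        if not convo:
            continue
        # Turns after the first are treated as iteration within the conversation.
        signals["follow_ups"] += len(convo) - 1
        # A new conversation that reopens nearly the same question reads as a restart.
        if previous_opener is not None and jaccard(convo[0], previous_opener) >= restart_threshold:
            signals["restarts"] += 1
        previous_opener = convo[0]
        signals["source_requests"] += sum(bool(SOURCE_REQUEST.search(p)) for p in convo)
    return signals
```

Decomposition, the first behavior on the list, is left out because simple rules can't detect it reliably.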

On the enterprise side, digital adoption platforms like WalkMe, Whatfix, and Pendo already solve a version of this problem for traditional enterprise software: in-app guidance, behavioral analytics, and contextual learning delivered while employees use Salesforce, SAP, and Workday. The DAP market was valued at roughly $1.2 billion in 2025, growing at 13–19% annually.
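As a sanity check, the market figures quoted here and in the opening can be compared with plain compound growth. This sketch assumes a constant annual rate over the eight years from 2025 to 2033. The helper names are illustrative.

```python
def project(value: float, rate: float, years: int) -> float:
    """Compound `value` at a constant annual `rate` for `years` years."""
    return value * (1 + rate) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns `start` into `end`."""
    return (end / start) ** (1 / years) - 1


# Figures from the text: ~$1.2B in 2025, 13-19% annual growth, $4-5B by 2033.
for rate in (0.13, 0.19):
    print(f"{rate:.0%}: ${project(1.2, rate, 8):.1f}B by 2033")
for target in (4.0, 5.0):
    print(f"${target:.0f}B by 2033 implies {implied_cagr(1.2, target, 8):.1%} a year")
```

Under these assumptions, 13% growth reaches only about $3.2B by 2033 and 19% reaches about $4.8B. The $4–5 billion projection therefore implies growth near the top of the quoted range, roughly 16.2% to 19.5% a year.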

Nobody has rebuilt that model for AI tools. The entire infrastructure of usage measurement, contextual coaching, and team-level analytics that exists for legacy enterprise software simply doesn't exist for the AI surfaces where your team now spends hours every day.

The Category: AI Usage Analytics + Just-in-Time Query Coaching

You're selling measured improvement in AI work quality. Not prompts.

"Prompt engineering" is the wrong frame. The phrase sounds tactical, even gimmicky. The more durable frame is capability infrastructure. Companies don't want to buy a course on clever prompting. They want fewer bad outputs, faster first drafts, stronger evidence handling, better compliance behavior, and a way to prove which teams are actually getting leverage from AI. McKinsey's research confirms that the practices most associated with AI value include role-based capability training, embedding AI into business processes, collecting performance feedback, and tracking well-defined KPIs. All of that maps directly to where this business sits.
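These outcomes only become provable once they are reduced to per-team numbers. Below is a minimal sketch, assuming each AI session can be labeled with whether its output was kept and whether the user checked sources. The `Session` fields, the KPI names, and the way "accepted" and "verified" get captured are all hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Session:
    team: str
    turns: int       # prompts sent in the session
    accepted: bool   # hypothetical label: user kept the output (e.g. copied it)
    verified: bool   # hypothetical label: user asked for or checked sources


def team_kpis(sessions: list[Session]) -> dict[str, dict[str, float]]:
    """Roll sessions up into per-team KPIs."""
    by_team: dict[str, list[Session]] = {}
    for s in sessions:
        by_team.setdefault(s.team, []).append(s)
    report = {}
    for team, rows in by_team.items():
        kept = [s.turns for s in rows if s.accepted]
        report[team] = {
            "acceptance_rate": sum(s.accepted for s in rows) / len(rows),
            "verification_rate": sum(s.verified for s in rows) / len(rows),
            # Fewer turns to a kept draft is one proxy for "faster first drafts".
            "median_turns_to_accept": median(kept) if kept else float("nan"),
        }
    return report
```

Keeping acceptance and verification as separate numbers is a deliberate choice. A team that accepts everything but never checks sources looks productive on one metric and risky on the other.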

The category needs a name that lands in a boardroom. "AI usage analytics and in-the-moment coaching" describes the product. "AI capability infrastructure" describes the strategic position.

Product Architecture: Four Layers

A browser extension that says "try adding more context" is a feature. A cross-tool telemetry and coaching layer is the beginning of a company.

Here's how to build the latter:
